6 Graph Feature Engineering Techniques

Must-know for building GNNs.

Like images, text, and tabular datasets have features, so do graph datasets.

This means when building models on graph datasets, we can engineer these features to achieve better performance.

Today, let me share 6 must-know techniques to do so.

Let’s begin!

Note: In case you missed it, we have already done an extensive and beginner-friendly crash course on graph neural networks (with implementations):

Part 1: A Crash Course on GNNs – Part 1

Part 2: A Crash Course on GNNs – Part 2.

Part 3: A Crash Course on GNNs – Part 3.

Dummy dataset

Before we start, let’s create a dummy social networking graph dataset with accounts and followers (which will also be accounts).

To achieve this, we first create the two DataFrames shown below, an accounts DataFrame and a followers DataFrame:

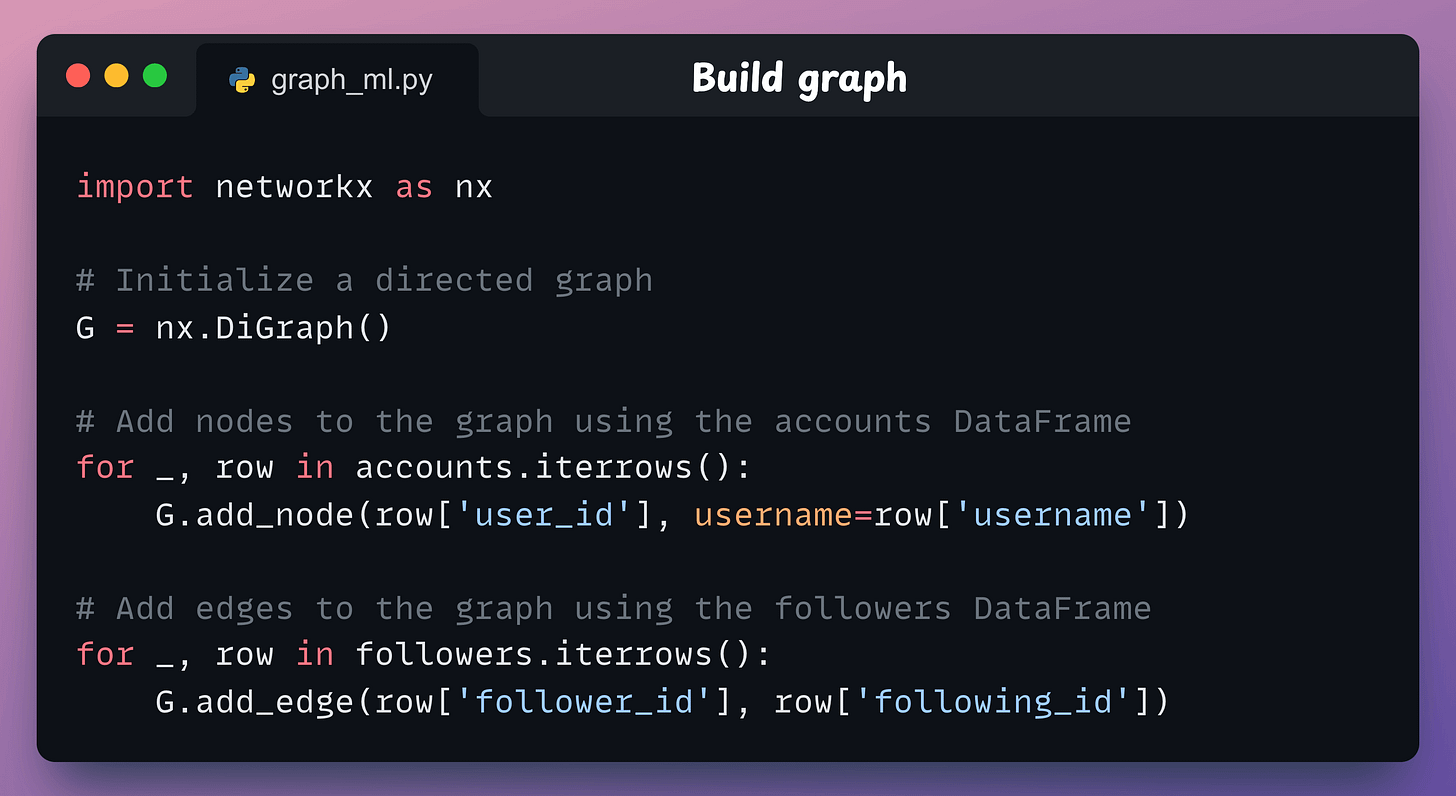

This is tabular, but we need to convert this into a graph format. To do this, we use networkx as follows:

We initialize a directed graph

G.Next, we add nodes to the graph using the

accountsDataFrame.Finally, we added edges between the nodes using the

followersDataFrame. These are directed edges from the follower to the user they are following.

This produces the following graph:

With that, we are now ready for feature engineering.

1-3) Node degree

Node degree is the most straightforward feature you can derive from a graph. In a directed graph (like above), there are two types of degrees:

In-Degree: The number of incoming edges (followers) a node has.

A high in-degree can indicate that a user is influential.

Out-Degree: The number of outgoing edges (followings) a node has.

A high out-degree can indicate that a user is very active (could also be spamming).

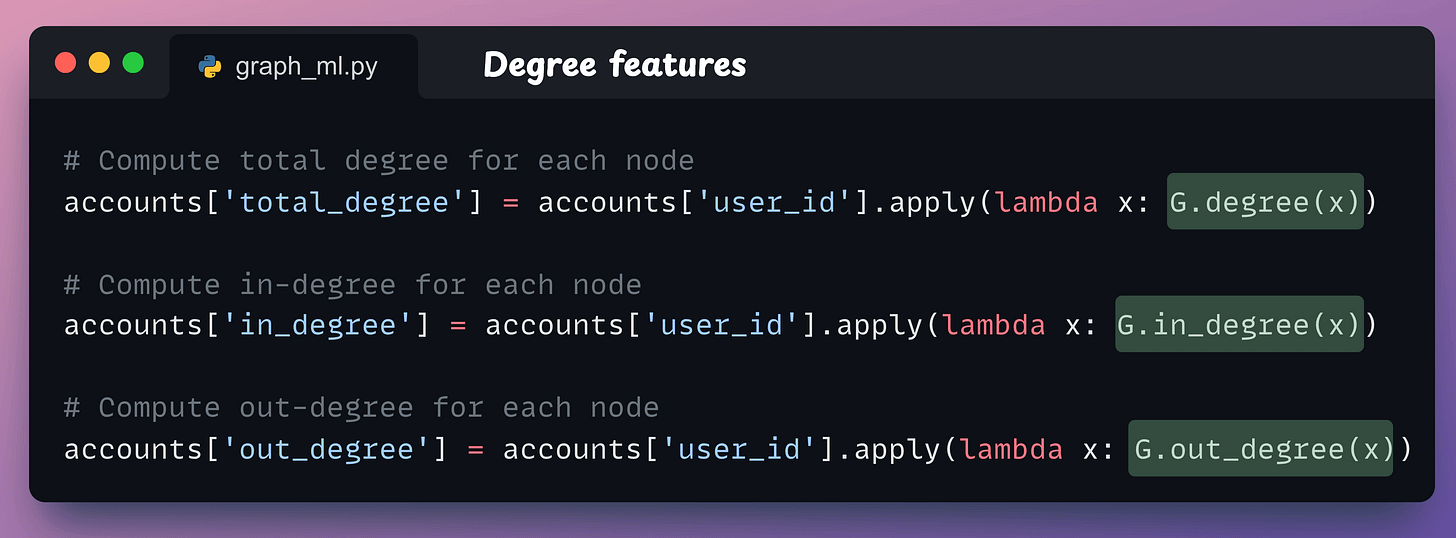

Here’s how we can compute them using NetworkX:

G.in_degree(x)counts edges directed toward the nodex.G.out_degree(x)counts edges directed away from the nodex.G.degree(x)is the sum of the in-degree and out-degree of the nodex.

These features are now part of the accounts DataFrame as depicted below:

4-6) Node centrality

Node degree features capture connectedness. However, they do not necessarily provide insights into the influence of those connections.

For instance, on a social networking site, a user might have many online friends (high degree). But we know that many users are just habitual of sending requests to everyone but they are not “well-connected” to anyone.

Thus, degree features do not fully capture the user’s influence.

Centrality features handle this; that is why the name “centrality.” In other words, how “central” a node is within the graph.

Betweenness centrality

This measures how often a node appears on the shortest paths between other nodes.

The rationale is that if a node often acts as a “bridge” between other nodes, it plays a key role in facilitating information flow.

For instance, in the following graph, the yellow node has a high betweenness centrality since it always lies on the shortest path between any two nodes.

Closeness centrality

This indicates how close a node is to all other nodes in the network based on the shortest paths.

If a node with high closeness centrality can quickly interact with others, it depicts that it can spread information efficiently across the network.

To compute closeness centrality for a node v, we sum the shortest path length from v to all other nodes and take its reciprocal:

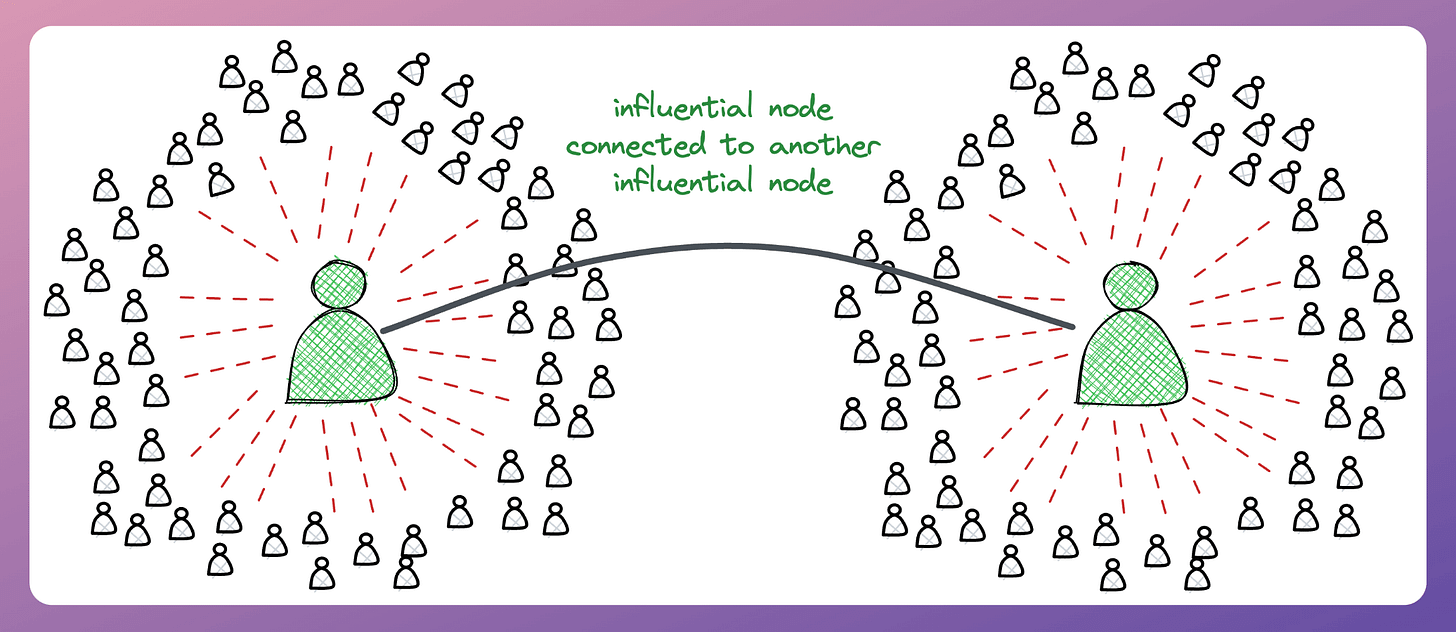

Eigenvector centrality

This goes beyond just the number of connections a node has and looks at the influence of those connections.

If a node with high eigenvector centrality is connected to other influential nodes, it amplifies its own influence.

It helps identify nodes that are influential not only due to their direct ties but also due to their connections with other influential nodes.

We covered the implementations of node centrality in the extensive and beginner-friendly crash course on graph neural networks (with implementations):

Part 1: A Crash Course on GNNs – Part 1

Part 2: A Crash Course on GNNs – Part 2.

Part 3: A Crash Course on GNNs – Part 3.

Here’s why you should care about GraphML:

Google Maps uses graph ML for ETA prediction.

Pinterest uses graph ML (PingSage) for recommendations.

Netflix uses graph ML (SemanticGNN) for recommendations.

Spotify uses graph ML (HGNNs) for audiobook recommendations.

Uber Eats uses graph ML (a GraphSAGE variant) to suggest dishes, restaurants, etc.

The list could go on since almost every major tech company I know employs graph ML in some capacity.

Becoming proficient in graph machine learning now seems to be as crucial as traditional deep learning to differentiate your profile and aim for these positions.

We cover:

Background of GNNs and their benefits.

Type of tasks for GNNs.

Data challenges in GNNs.

Frameworks to build GNNs.

Advanced architectures to build robust GNNs.

Feature engineering methods.

Practical demos.

Insights and some best practices.

Over to you: What are some graph feature engineering methods?

P.S. For those wanting to develop “Industry ML” expertise:

At the end of the day, all businesses care about impact. That’s it!

Can you reduce costs?

Drive revenue?

Can you scale ML models?

Predict trends before they happen?

We have discussed several other topics (with implementations) in the past that align with such topics.

Here are some of them:

Learn techniques to run large models on small devices: Quantization: Optimize ML Models to Run Them on Tiny Hardware

Learn how to generate prediction intervals or sets with strong statistical guarantees for increasing trust: Conformal Predictions: Build Confidence in Your ML Model’s Predictions.

Learn how to identify causal relationships and answer business questions: A Crash Course on Causality – Part 1

Learn how to scale ML model training: A Practical Guide to Scaling ML Model Training.

Learn techniques to reliably roll out new models in production: 5 Must-Know Ways to Test ML Models in Production (Implementation Included)

Learn how to build privacy-first ML systems: Federated Learning: A Critical Step Towards Privacy-Preserving Machine Learning.

Learn how to compress ML models and reduce costs: Model Compression: A Critical Step Towards Efficient Machine Learning.

All these resources will help you cultivate key skills that businesses and companies care about the most.

SPONSOR US

Get your product in front of 100,000 data scientists and other tech professionals.

Our newsletter puts your products and services directly in front of an audience that matters — thousands of leaders, senior data scientists, machine learning engineers, data analysts, etc., who have influence over significant tech decisions and big purchases.

To ensure your product reaches this influential audience, reserve your space here or reply to this email to ensure your product reaches this influential audience.

I’m still only a first year student in comp sci. Are there any fundamentals I should cover before diving into these skills?