A Foundational Guide to Evaluation of LLM Apps (Part B)

Understanding model benchmarks, LLM application evaluation, and tooling.

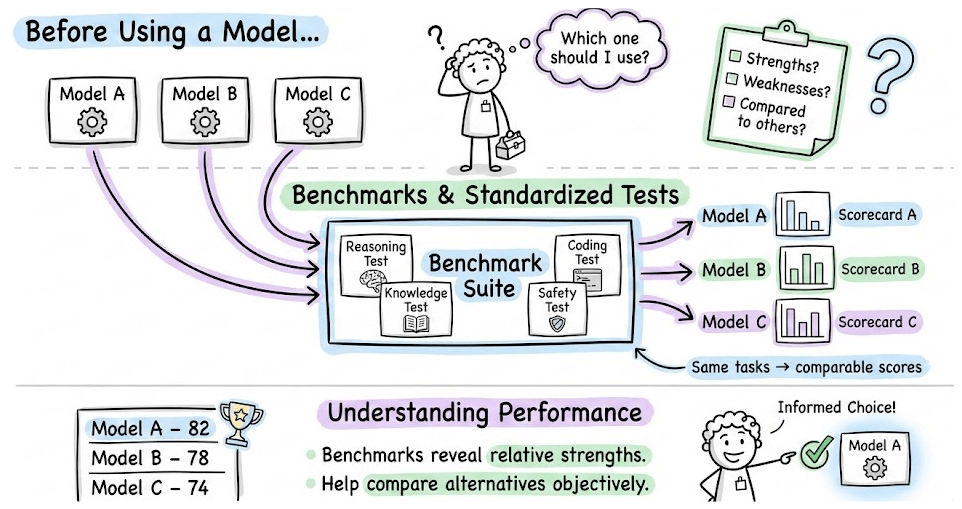

Part 10 of the full LLMOps course is now available, where we cover evaluation benchmarks in LLM applications, dive deeper into task-specific methodologies, and understand the core tooling for evaluation of LLM apps.

It also includes hands-on code demos built with the open-source DeepEval framework, so the evaluation concepts feel concrete.

Why care?

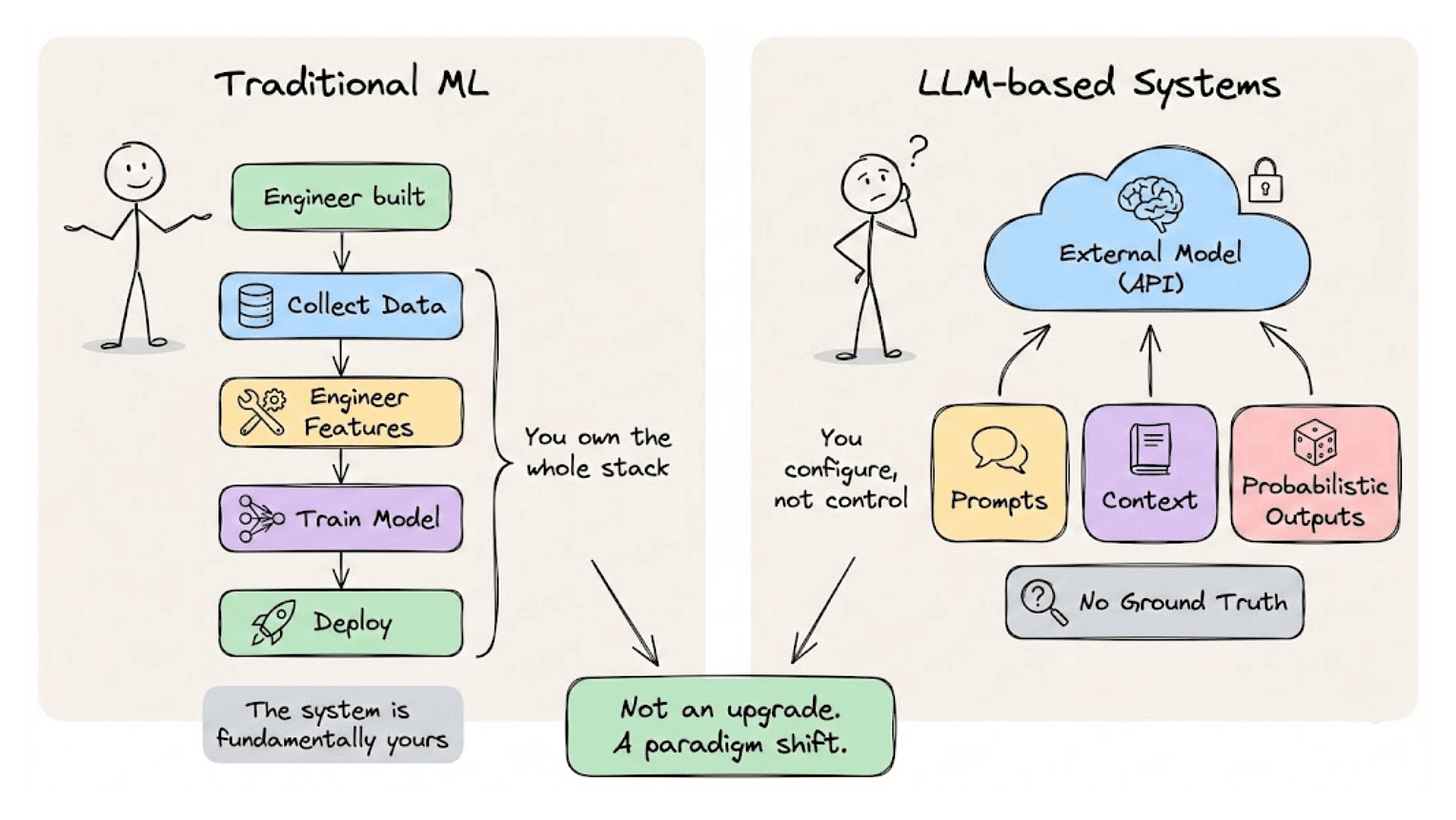

The transition from traditional ML to LLM-based systems is often framed as an upgrade, but it’s more accurate to call it a paradigm shift.

In the traditional ML world, engineers owned the entire lifecycle. They collected data, engineered features, trained models, and deployed artifacts they understood inside out. The practices of MLOps emerged to bring discipline to this lifecycle, and everything worked well because the system was fundamentally theirs.

LLM-based applications operate under a different set of assumptions. The model is often external. The behavior is shaped through prompts and context rather than training loops. The outputs are probabilistic, and evaluation becomes surprisingly difficult when there’s no single correct answer to compare against.
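To make the "no single correct answer" problem tangible, here is a minimal sketch in plain Python. It is not taken from the course or from DeepEval; the function names `exact_match` and `token_f1` and the example strings are illustrative assumptions. It compares a strict string match against a simple token-overlap score on two answers that say the same thing in different words.

```python
from collections import Counter


def exact_match(prediction: str, reference: str) -> bool:
    """Strict comparison, the kind of check that works for classification labels."""
    return prediction.strip().lower() == reference.strip().lower()


def token_f1(prediction: str, reference: str) -> float:
    """Token-overlap F1: a softer (still imperfect) similarity score."""
    pred_tokens = prediction.lower().split()
    ref_tokens = reference.lower().split()
    # Multiset intersection counts each shared token at most as often as it
    # appears in both strings.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


# Hypothetical example strings: same meaning, different word order.
reference = "The capital of France is Paris"
prediction = "Paris is the capital of France"

print(exact_match(prediction, reference))  # the strict check rejects a correct answer
print(token_f1(prediction, reference))     # the overlap score treats them as equivalent
```

Token overlap is itself easy to fool, since it ignores word order and meaning, which is part of why LLM evaluation tends to need task-specific metrics rather than a single string comparison.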

These differences have real consequences for how production systems need to be built and maintained.

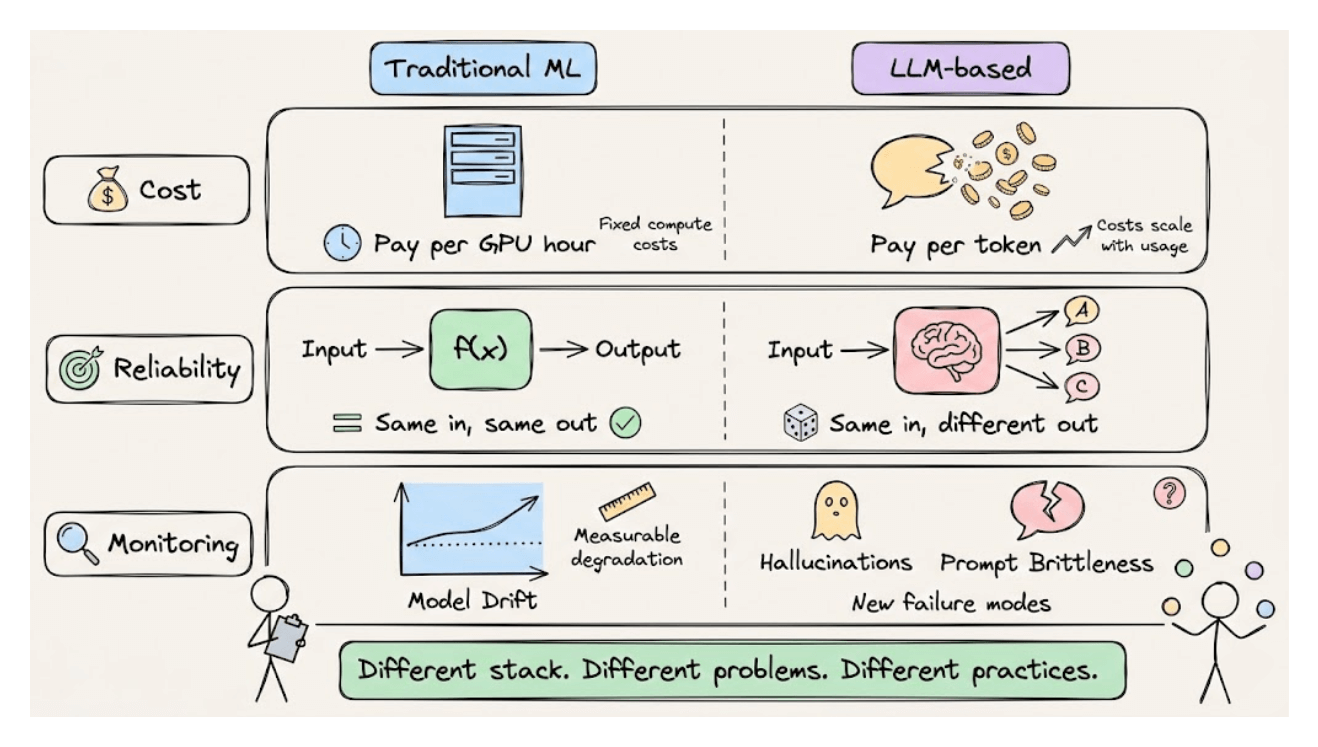

Cost structures change because you’re paying per token, not per GPU hour.
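As a rough illustration of per-token pricing, the sketch below estimates the cost of one request from its token counts. The function name `request_cost` and the prices are hypothetical placeholders, not any provider's actual rates.

```python
def request_cost(
    input_tokens: int,
    output_tokens: int,
    input_price_per_1k: float,
    output_price_per_1k: float,
) -> float:
    """Estimate the cost of one LLM call when billing is per 1,000 tokens."""
    input_cost = input_tokens / 1000 * input_price_per_1k
    output_cost = output_tokens / 1000 * output_price_per_1k
    return input_cost + output_cost


# Hypothetical prices (assumptions for illustration only).
INPUT_PRICE_PER_1K = 0.001
OUTPUT_PRICE_PER_1K = 0.002

# One request with a 2,000-token prompt and a 500-token response.
cost = request_cost(2000, 500, INPUT_PRICE_PER_1K, OUTPUT_PRICE_PER_1K)
print(f"Estimated cost per request: ${cost:.4f}")
```

Under this model, cost scales with prompt and response length on every call, so long contexts and verbose outputs show up directly on the bill, whereas a GPU-hour model charges for provisioned time regardless of how many tokens flow through.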

Reliability means something different when the same input can produce varying outputs. Monitoring shifts from tracking model drift to detecting hallucinations and prompt brittleness.

LLMOps is the discipline that addresses these new realities. It builds on the foundations of MLOps while extending them for systems where foundation models are the core building blocks.

This course develops that discipline systematically, giving you both the conceptual frameworks and practical implementations to build LLM applications that perform reliably in production settings.

Just like the MLOps course, each chapter clearly explains the necessary concepts and provides examples, diagrams, and implementations.

As we progress, we will develop the critical thinking needed to take our applications to the next stage, along with a framework for doing so.

👉 Over to you: What would you like to learn in the LLMOps course?

Thanks for reading!