A Visual and Intuitive Explanation to Momentum in Machine Learning

Speedup machine learning model training with little effort.

Training time is paramount in training machine learning models, especially large models.

As you progress towards building large models, every bit of possible optimization is crucial.

And, of course, there are various ways to speed up model training.

For instance, you may use:

Batch processing

Distributed training

Use better Hyperparameter Optimization, like Bayesian Optimization, which we discussed here: Bayesian Optimization for Hyperparameter Tuning.

and many other techniques.

Momentum is another such reliable and effective technique.

While Momentum is quite popular, I have often seen many struggling to intuitively understand:

How it works?

Why is it effective?

Let’s understand this today!

In gradient descent, every parameter update solely depends on the current gradient. This is depicted below:

But momentum-based optimization also considers a moving average of past gradients.

Let’s understand more visually why this makes sense.

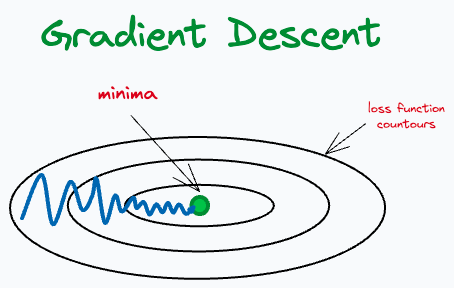

Imagine the cost function has contours as shown below:

The green point denotes the minima of the loss function we are aiming for.

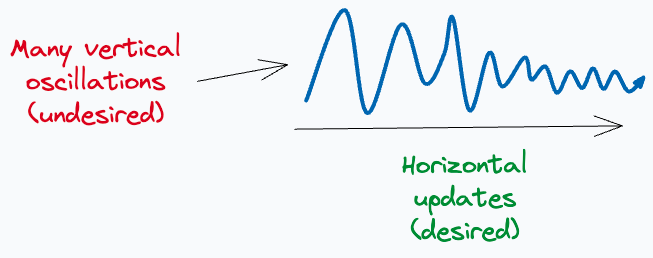

With gradient descent, we would expect oscillating updates as shown below, and eventually, it may converge to the desired minima.

Here, the parameter updates in the vertical direction appear unnecessary, don’t they?

For faster convergence, ideally, we would expect gradient updates to take place solely in the horizontal direction.

But gradient descent possesses many unnecessary oscillations in the vertical direction.

Momentum allows us to avoid these vertical oscillations.

How?

We discussed above that Momentum considers a moving average of past gradients.

From the image above, it is evident that the moving average of the past parameter updates in the vertical direction is small.

But that in the horizontal direction is large, which is precisely what we want.

By considering a moving average of parameter updates, we can:

Smooth out the optimization trajectory.

Reduce unnecessary oscillations in parameter updates.

This is the core idea behind Momentum.

Its efficacy is evident from the image below:

As depicted above:

The model converges faster with Momentum.

The training loss is high without Momentum.

The test accuracy is low without Momentum.

Of course, Momentum does introduce another hyperparameter (Momentum rate) in the model, which should be tuned appropriately:

For instance, considering the 2D contours we discussed above:

Setting an extremely large value of Momentum rate will significantly expedite gradient update in the horizontal direction. This may lead to overshooting the minima.

What’s more, setting an extremely small value of Momentum will slow down the optimal gradient update, defeating the whole purpose of Momentum.

👉 Over to you: What are some other reliable ways to speed up machine learning model training? Let me know :)

👉 If you liked this post, don’t forget to leave a like ❤️. It helps more people discover this newsletter on Substack and tells me that you appreciate reading these daily insights. The button is located towards the bottom of this email.

Thanks for reading!

Latest full articles

If you’re not a full subscriber, here’s what you missed last month:

Formulating and Implementing the t-SNE Algorithm From Scratch.

Generalized Linear Models (GLMs): The Supercharged Linear Regression.

Gaussian Mixture Models (GMMs): The Flexible Twin of KMeans.

Where Did The Assumptions of Linear Regression Originate From?

To receive all full articles and support the Daily Dose of Data Science, consider subscribing:

👉 Tell the world what makes this newsletter special for you by leaving a review here :)

👉 If you love reading this newsletter, feel free to share it with friends!