An Intuitive Guide to Generative and Discriminative Models in Machine Learning

A popular interview question.

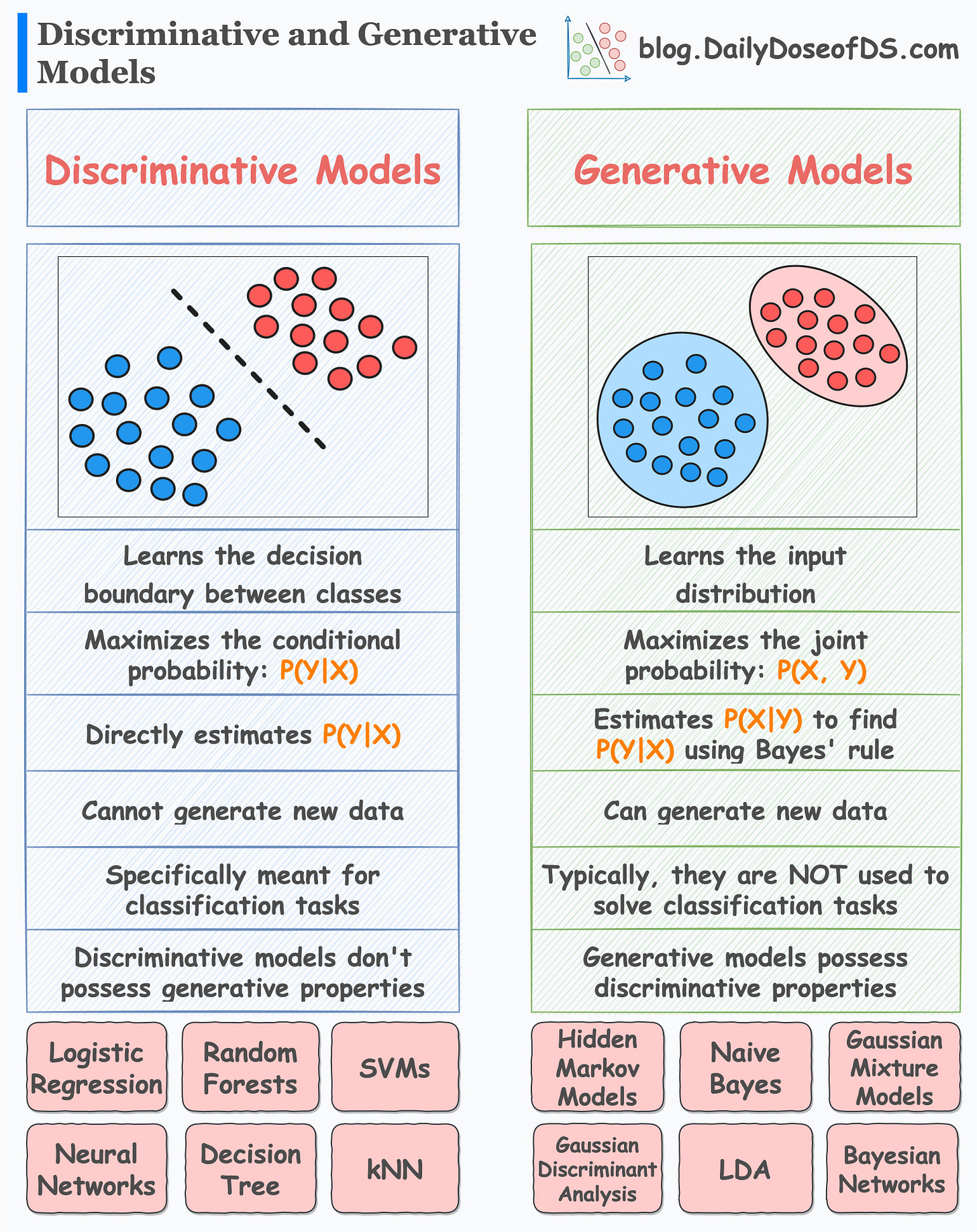

Many machine learning models can be classified into two categories:

Generative

Discriminative

This is depicted in the image above.

Today, let’s understand what they are.

Discriminative models

Discriminative models:

learn decision boundaries that separate different classes.

maximize the conditional probability P(Y|X): given an input X, they maximize the probability of the correct label Y.

are built explicitly for prediction tasks such as classification; they do not model how X itself is distributed.

Examples include:

Logistic regression

Random Forest

Neural Networks

Decision Trees, etc.
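To make the P(Y|X) idea concrete, here is a minimal sketch of logistic regression written from scratch. The toy data, the learning rate, and the function names are made up for illustration; this is not how any particular library implements it.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def fit_logistic(xs, ys, lr=0.1, epochs=2000):
    """Fit w and b by gradient ascent on the conditional log-likelihood sum(log P(y|x))."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in zip(xs, ys):
            err = y - sigmoid(w * x + b)  # gradient of log P(y|x) w.r.t. the logit
            grad_w += err * x
            grad_b += err
        w += lr * grad_w / n
        b += lr * grad_b / n
    return w, b

# Toy 1-D data (invented): class 0 sits near 1, class 1 sits near 4.
xs = [0.5, 1.0, 1.5, 2.0, 3.0, 3.5, 4.0, 4.5]
ys = [0, 0, 0, 0, 1, 1, 1, 1]

w, b = fit_logistic(xs, ys)
boundary = -b / w               # the point where P(Y=1|X) = 0.5
p_low = sigmoid(w * 1.0 + b)    # P(Y=1 | X=1.0)
p_high = sigmoid(w * 4.0 + b)   # P(Y=1 | X=4.0)
print(f"decision boundary at x = {boundary:.2f}")
print(f"P(Y=1|X=1.0) = {p_low:.3f}, P(Y=1|X=4.0) = {p_high:.3f}")
```

Notice that the model only ever stores w and b. Nothing in it describes what a typical X looks like, which is why it can separate the classes but cannot produce new data points.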

Generative models

Generative models:

maximize the joint probability: P(X, Y)

learn the class-conditional distribution P(X|Y)

are typically not built primarily for classification, although they can be used for it.

Examples include:

Naive Bayes

Linear Discriminant Analysis (LDA)

Gaussian Mixture Models, etc.

We covered Joint and Conditional probability before. Read this post if you wish to learn what they are: A Visual Guide to Joint, Marginal and Conditional Probabilities.
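As a quick reminder of how these quantities relate, here are the product rule and Bayes' rule, written for a discrete label Y. These are standard probability identities, not something specific to this post:

$$
P(X, Y) = P(X \mid Y)\, P(Y)
$$

$$
P(Y \mid X) = \frac{P(X \mid Y)\, P(Y)}{\sum_{y'} P(X \mid Y = y')\, P(Y = y')}
$$

So a model that has learned P(X|Y) and P(Y) has everything it needs to compute P(Y|X), while a model that has only learned P(Y|X) cannot recover P(X|Y).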

As generative models learn the underlying distribution, they can generate new samples.

However, this is not possible with discriminative models, because they never model how X itself is distributed.

Furthermore, generative models possess discriminative properties, i.e., they can be used for classification tasks (if needed).

However, discriminative models do not possess generative properties.
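Here is a minimal sketch of both abilities in one generative model. It assumes a single feature and a Gaussian P(X|Y) per class; the toy data and all function names are hypothetical, chosen only for illustration.

```python
import math
import random
import statistics

def fit_generative(xs, ys):
    """Estimate the prior P(Y) and a 1-D Gaussian P(X|Y) for each class."""
    model = {}
    for label in sorted(set(ys)):
        points = [x for x, y in zip(xs, ys) if y == label]
        model[label] = {
            "prior": len(points) / len(xs),
            "mean": statistics.mean(points),
            "std": statistics.stdev(points),
        }
    return model

def gaussian_pdf(x, mean, std):
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2 * math.pi))

def posterior(model, x):
    """Classify with Bayes' rule: P(Y|X) is proportional to P(X|Y) * P(Y)."""
    joint = {
        label: p["prior"] * gaussian_pdf(x, p["mean"], p["std"])
        for label, p in model.items()
    }
    total = sum(joint.values())
    return {label: value / total for label, value in joint.items()}

def sample(model, rng):
    """Generate a new (x, y) pair: draw y from P(Y), then x from P(X|Y)."""
    labels = list(model)
    weights = [model[label]["prior"] for label in labels]
    label = rng.choices(labels, weights=weights)[0]
    return rng.gauss(model[label]["mean"], model[label]["std"]), label

# Toy 1-D data (invented): class 0 sits near 1, class 1 sits near 4.
xs = [0.5, 1.0, 1.5, 2.0, 3.0, 3.5, 4.0, 4.5]
ys = [0, 0, 0, 0, 1, 1, 1, 1]

model = fit_generative(xs, ys)
rng = random.Random(0)
new_points = [sample(model, rng) for _ in range(5)]
print("P(Y|X=1.0):", posterior(model, 1.0))
print("generated samples:", new_points)
```

The same fitted parameters power both `posterior` (classification) and `sample` (generation). A model that only stores a decision boundary has nothing comparable to `sample`.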

Let’s consider an example.

Imagine yourself as a language classification system.

There are two ways you can classify languages.

Learn every language and then classify new text based on the acquired knowledge.

Understand some distinctive patterns in each language without truly learning the language. Once done, classify new text.

Can you figure out which of the above is generative and which one is discriminative?

The first approach is generative. This is because you have learned the underlying distribution of each language.

In other words, you learned the joint distribution P(Words, Language).

Moreover, since you understand the underlying distribution, you can now generate new sentences, can’t you?

The second approach is a discriminative approach. This is because you only learned specific distinctive patterns of each language.

It is like:

If so and so words appear, it is likely “Language A.”

If this specific set of words appears, it is likely “Language B.”

and so on.

In other words, you learned the conditional distribution P(Language|Words).

Here, can you generate new sentences now? No, right?
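The language example can be sketched in code. This is a toy illustration under loud assumptions: the two tiny corpora are invented, the generative side is a simple word-frequency (unigram) model with add-one smoothing, and the "distinctive patterns" side is a hand-rolled stand-in for a discriminative model rather than a trained one.

```python
import math
import random
from collections import Counter

# Toy corpora, invented for illustration only.
corpus = {
    "English": "the cat sat on the mat the dog ran".split(),
    "Spanish": "el gato se sienta en la casa el perro corre".split(),
}
vocab = sorted({word for words in corpus.values() for word in words})
total_words = sum(len(words) for words in corpus.values())

# Generative view: estimate P(Language) and P(Word | Language).
prior = {lang: len(words) / total_words for lang, words in corpus.items()}
counts = {lang: Counter(words) for lang, words in corpus.items()}

def word_prob(word, lang):
    # Add-one smoothing so unseen words get a small nonzero probability.
    return (counts[lang][word] + 1) / (len(corpus[lang]) + len(vocab))

def classify_generative(sentence):
    scores = {
        lang: math.log(prior[lang])
        + sum(math.log(word_prob(word, lang)) for word in sentence.split())
        for lang in corpus
    }
    return max(scores, key=scores.get)

def generate(lang, n_words, rng):
    # Sampling is possible because we modeled P(Word | Language).
    weights = [word_prob(word, lang) for word in vocab]
    return " ".join(rng.choices(vocab, weights=weights, k=n_words))

# Pattern view: remember only the words that appear in exactly one language.
distinctive = {}
for lang, words in corpus.items():
    other_words = {w for other, ws in corpus.items() if other != lang for w in ws}
    distinctive[lang] = set(words) - other_words

def classify_by_patterns(sentence):
    hits = {
        lang: sum(word in marks for word in sentence.split())
        for lang, marks in distinctive.items()
    }
    return max(hits, key=hits.get)

rng = random.Random(0)
print(classify_generative("the dog sat"), classify_by_patterns("el gato"))
print(generate("Spanish", 5, rng))
```

Both approaches can label a sentence, but only the first one has a `generate` function. The pattern-based classifier knows which words give a language away, and nothing about how often the remaining words occur.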

This is the difference between generative and discriminative models.

Also, the above description might persuade you that generative models are more generally useful, but that is not true.

This is because generative models have their own modeling complications.

For instance, generative models typically require more data than discriminative models.

Relate it to the language classification example again.

Imagine the amount of data you would need to learn all languages (generative approach) vs. the amount of data you would need to understand some distinctive patterns (discriminative approach).
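One rough way to put numbers on this is to count free parameters. The sketch below assumes d binary features and k classes, and the function names are hypothetical. Keep in mind that parameter count is only a proxy for how much data a model needs, not a precise measure.

```python
def full_joint_params(d, k):
    """Unrestricted table for P(X|Y) per class, plus the class prior P(Y)."""
    return k * (2 ** d - 1) + (k - 1)

def naive_bayes_params(d, k):
    """One Bernoulli parameter per feature per class, plus the class prior."""
    return k * d + (k - 1)

def logistic_params(d):
    """Binary logistic regression: one weight per feature plus a bias."""
    return d + 1

d, k = 10, 2
print("full generative table:", full_joint_params(d, k))
print("naive Bayes:", naive_bayes_params(d, k))
print("logistic regression:", logistic_params(d))
```

Modeling the full distribution without simplifying assumptions grows exponentially with the number of features. That is why practical generative models such as Naive Bayes lean on strong independence assumptions, while a discriminative model only has to describe the boundary.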

Typically, discriminative models outperform generative models in classification tasks.

👉 Over to you: What are some other problems while training generative models?
