Bellman Equations and Dynamic Programming in RL

The full RL nanodegree, covered with implementation.

Recently, we launched a hands-on course series on reinforcement learning.

Part 3 is now available, and you can read it here →

Part 2 covered the MDP framework and value functions. This one teaches you the equations and algorithms that actually compute them.

It covers:

The Bellman expectation equations

the Bellman optimality equations

and the dynamic programming methods built on top of them, like iterative policy evaluation, policy improvement, policy iteration, and value iteration, with hands-on implementations.

Everything is covered from scratch, so no RL background is required.

You can read Part 3 of the course here →

Why care?

Most ML practitioners today have deep intuition for supervised learning.

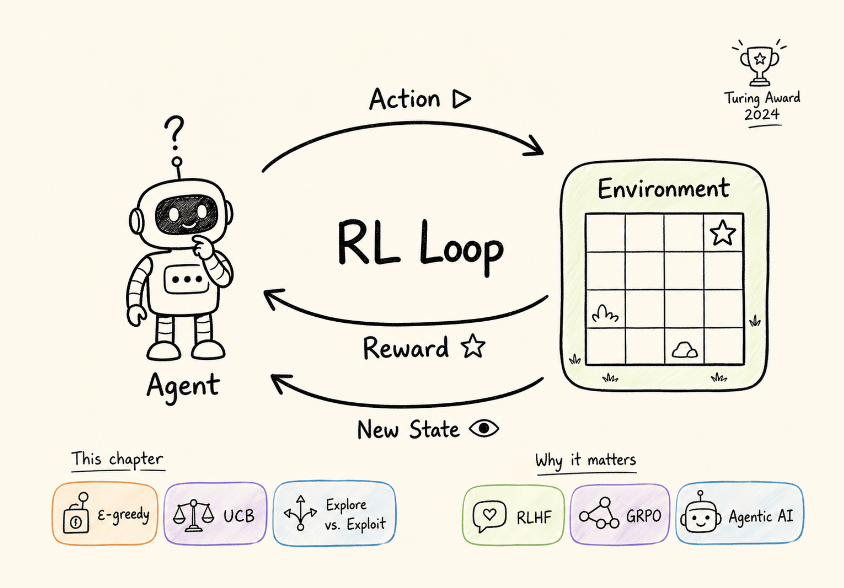

But RL operates on a fundamentally different set of ideas. There is no labeled dataset. The agent generates its own training data through interaction. Actions have delayed consequences. Exploration is not optional but rather a core part of the learning process.

This is also where the biggest breakthroughs in AI are compounding right now. The field is having its moment, and the demand for engineers who understand it deeply is growing fast.

Every technique that made recent LLMs dramatically better (RLHF, GRPO, DPO, constitutional AI) is a direct application of RL.

Every agentic system that takes multi-step actions, calls tools, and operates over long horizons is an RL problem.

When you read about these in a paper or blog post, they only land if you understand what a policy is, what a value function measures, why reward shaping is hard, and how exploration works.

This series builds that understanding from the ground up, concept by concept, with math where it matters and hands-on code you can run.

This series is structured the same way as our MLOps/LLMOps course: concept by concept, with clear explanations, diagrams, math where it matters, and hands-on implementations you can run.

And no prior RL background is needed.

Over to you: What topics would you like us to cover in this RL series?

Thanks for reading!