[Hands-on] Build OpenClaw’s Core In a Single Visual Workflow

...using a 100% open-source stack!

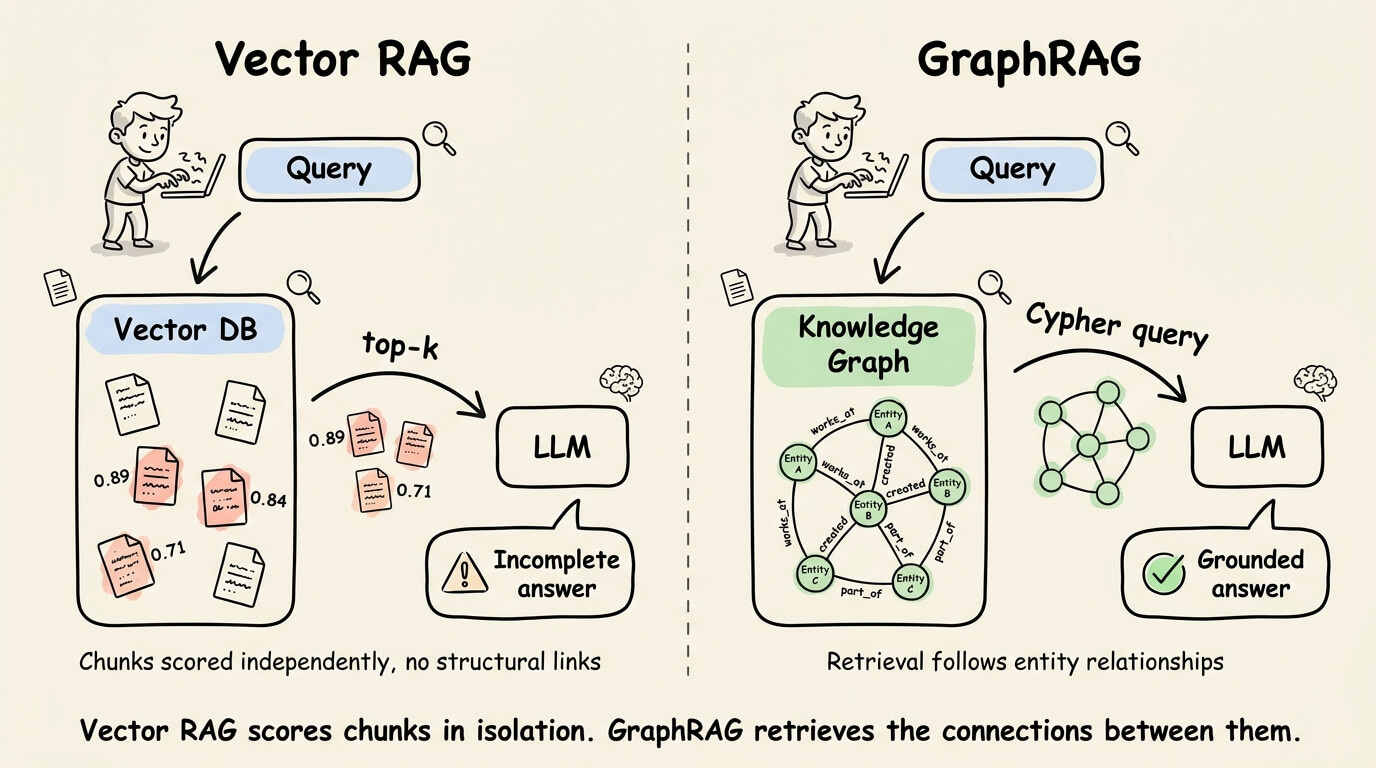

A graph-first alternative to vector RAG

Vector RAG scores chunks independently against a query. That works for single-hop fact lookups, but it breaks when a query requires connecting information across multiple chunks, because nothing structurally links the chunks that get retrieved.
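To make the "scored independently" point concrete, here is a minimal sketch. It uses a bag-of-words cosine score as a stand-in for embedding similarity (an assumption made to keep the example dependency-free), and the chunks and query are made up for illustration.

```python
import math
import re
from collections import Counter

def bow(text):
    # Bag-of-words vector; a crude stand-in for a real embedding model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def top_k(query, chunks, k=1):
    # Each chunk is scored against the query on its own;
    # no score depends on any other chunk in the collection.
    q = bow(query)
    scored = [(cosine(q, bow(c)), c) for c in chunks]
    return sorted(scored, reverse=True)[:k]

# Hypothetical chunks: answering the query needs chunk 0 AND chunk 1.
chunks = [
    "Alice founded Acme Robotics.",
    "Acme Robotics is headquartered in Lisbon.",
    "Bob enjoys hiking on weekends.",
]
query = "Which city is the company founded by Alice headquartered in?"

for score, chunk in top_k(query, chunks, k=3):
    print(f"{score:.2f}  {chunk}")
```

The two relevant chunks are connected only through the shared entity "Acme Robotics", yet the scoring never sees that link. Whether both chunks make the cut depends on `k` and on how well each one happens to overlap with the query wording.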

FalkorDB’s GraphRAG SDK builds a knowledge graph from your source data (PDFs, CSVs, HTML, URLs), auto-detects an ontology using an LLM, and converts natural language into Cypher graph queries at query time.

Retrieval follows entity relationships instead of embedding distance, so the LLM gets a structurally connected context rather than isolated fragments.
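A rough sketch of what "following entity relationships" means, in plain Python rather than the GraphRAG SDK or a real graph database. The triples, relation names, and helper functions are all hypothetical; in a graph database, the same traversal would be expressed as a Cypher pattern.

```python
from collections import defaultdict

# Hypothetical knowledge graph as (subject, relation, object) triples.
triples = [
    ("Alice", "FOUNDED", "Acme Robotics"),
    ("Acme Robotics", "HEADQUARTERED_IN", "Lisbon"),
    ("Bob", "ENJOYS", "Hiking"),
]

def build_index(triples):
    # Map (subject, relation) -> list of objects for fast edge lookups.
    index = defaultdict(list)
    for subject, relation, obj in triples:
        index[(subject, relation)].append(obj)
    return index

def follow(index, start, relations):
    # Walk the graph one relation at a time, collecting the edges used.
    frontier, path = [start], []
    for relation in relations:
        next_frontier = []
        for node in frontier:
            for target in index.get((node, relation), []):
                path.append((node, relation, target))
                next_frontier.append(target)
        frontier = next_frontier
    return frontier, path

# An illustrative Cypher pattern for the same two-hop question could look like:
#   MATCH ({name: 'Alice'})-[:FOUNDED]->(c)-[:HEADQUARTERED_IN]->(city) RETURN city
index = build_index(triples)
answers, context = follow(index, "Alice", ["FOUNDED", "HEADQUARTERED_IN"])
print(answers)
print(context)
```

The returned `context` is a connected chain of facts, which is the kind of structurally linked input the LLM receives instead of separately ranked fragments.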

On GraphRAG-Bench (ICLR’26), the solution ranked #1 overall across all four task types (fact retrieval, complex reasoning, contextual summarization, creative generation) against 8 systems, including Microsoft GraphRAG.

It’s LLM-agnostic, supports multi-agent orchestration with domain-specific KG agents, and runs on a single machine.

[Hands-on] Build OpenClaw’s core in a single visual workflow

OpenClaw runs a seven-stage agentic loop with multi-channel routing, persistent memory, and tool execution, all inside a local gateway process.

The orchestration logic is powerful but opaque. It lives inside the runtime, and what you interact with is a JSON config file and CLI commands.

You can control, to some extent, what the agent does, but not how the routing and decision-making are wired together.

In the demo below, we rebuilt OpenClaw’s core in a single visual workflow using Sim (open-source with 27k stars) and made that wiring explicit:

Sim is an open-source workflow builder where every routing decision, tool call, and memory read is a visible node on a canvas.

We rebuilt the full OpenClaw pipeline in it using:

- 25 blocks
- 29 connections
- short-term and long-term memory
- multi-channel output across Telegram and Slack

So essentially, you have the same capabilities, but the entire orchestration graph is inspectable and editable.
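To illustrate what an inspectable orchestration graph looks like as data, here is a small sketch. This is not Sim's actual workflow format; the `Block` and `Workflow` classes, the block names, and the block kinds are all hypothetical, and the example uses 5 blocks rather than the full 25.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Block:
    id: str
    kind: str  # e.g. "trigger", "memory", "router", "output" (made-up kinds)

@dataclass
class Workflow:
    blocks: dict = field(default_factory=dict)
    connections: list = field(default_factory=list)

    def add_block(self, block_id, kind):
        self.blocks[block_id] = Block(block_id, kind)

    def connect(self, source, target):
        # Refuse edges that point at blocks that do not exist.
        if source not in self.blocks or target not in self.blocks:
            raise KeyError(f"unknown block in connection {source} -> {target}")
        self.connections.append((source, target))

    def downstream(self, block_id):
        # Everything a block hands off to, in the order it was wired.
        return [t for s, t in self.connections if s == block_id]

wf = Workflow()
for block_id, kind in [
    ("incoming_message", "trigger"),
    ("memory_read", "memory"),
    ("router", "router"),
    ("telegram_out", "output"),
    ("slack_out", "output"),
]:
    wf.add_block(block_id, kind)

wf.connect("incoming_message", "memory_read")
wf.connect("memory_read", "router")
wf.connect("router", "telegram_out")
wf.connect("router", "slack_out")

# Every routing decision is a visible edge that can be listed or rewired.
for source, target in wf.connections:
    print(f"{source} -> {target}")
```

Because the wiring is explicit data, changing where the router sends output amounts to editing an edge, not reading runtime internals.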

To build this, we also used the Sim Copilot to generate the workflow from natural language. A single prompt produced the entire 25-block workflow, all connections intact.

Moreover, the full stack is open-source, self-hostable, and runs local models through Ollama.
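For reference, this is roughly what talking to a local model through Ollama looks like at the HTTP level. It is a sketch of calling an Ollama server directly, not of how Sim integrates with it. It assumes Ollama is running on its default port (11434), and the model name `llama3` is a placeholder for whatever model you have pulled.

```python
import json
import urllib.request

# Ollama's default local endpoint for single-prompt generation.
OLLAMA_URL = "http://localhost:11434/api/generate"

def build_generate_request(prompt, model="llama3"):
    # "stream": False asks for one JSON object instead of a token stream.
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def generate(prompt, model="llama3"):
    # Requires a running Ollama server with the given model available.
    with urllib.request.urlopen(build_generate_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]

# Example call (needs a local Ollama server):
# print(generate("In one sentence, what does a router block do?"))
```

Nothing in this request leaves the machine, which is what makes the fully self-hosted setup possible.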

Watch the full walkthrough in the video above.

You can find the Sim GitHub repo here → (don’t forget to star it)

Thanks for reading!