How to Build an OS for Your AI Workforce?

An OS layer to manage a fleet of AI agents!

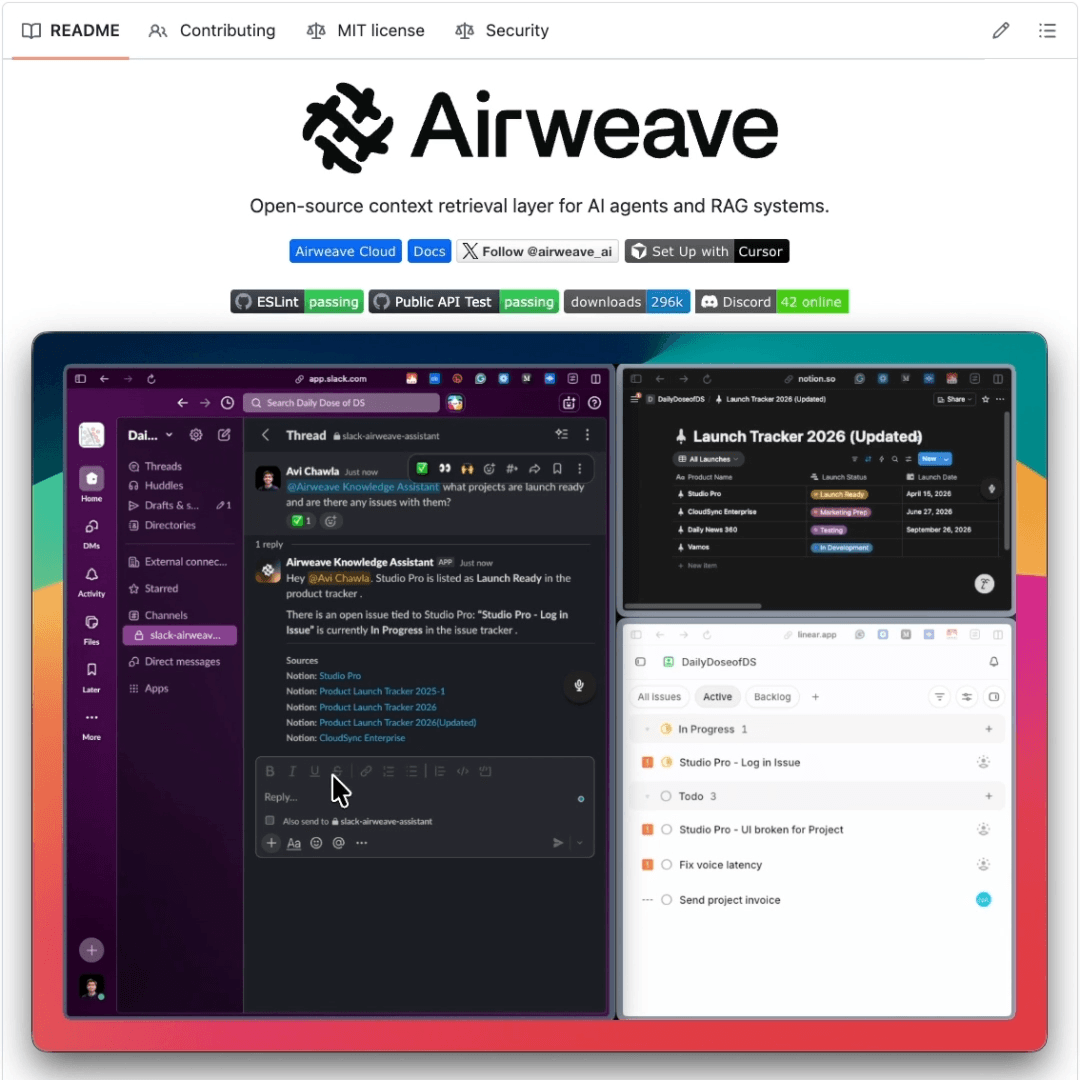

An open-source solution to Enterprise AI search!

We found a self-hosted Slack assistant that answers your questions by searching across all your company’s tools in a single query.

It’s built on top of Airweave, an open-source context retrieval layer that makes all your tools searchable for agents using semantic, keyword, and agentic search.

Here’s how the app works:

The app watches for questions in Slack.

It searches every connected tool at once using Airweave (Notion, GitHub, Jira, Linear, etc.) to find relevant context.

The Airweave engine ranks the results by relevance and returns references to the original docs.

An LLM generates the final response and sends it back to Slack with citations.
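The four steps above can be sketched in a few lines of Python. This is a minimal illustration of the flow, not Airweave's actual API: `Hit`, `search_all`, `answer`, and the stub connectors and stub LLM are all hypothetical names made up for this example, and the merge-by-score ranking stands in for whatever the real engine does.

```python
from dataclasses import dataclass


@dataclass
class Hit:
    source: str   # e.g. "notion", "github"
    title: str
    url: str      # reference back to the original doc
    score: float  # relevance score, higher is better


def search_all(question: str, connectors: dict) -> list[Hit]:
    """Fan the question out to every connector and merge the hits by score."""
    hits = []
    for name, search_fn in connectors.items():
        hits.extend(search_fn(question))
    return sorted(hits, key=lambda h: h.score, reverse=True)


def answer(question: str, connectors: dict, llm, top_k: int = 3) -> str:
    """Build a cited reply from the top-ranked hits."""
    top = search_all(question, connectors)[:top_k]
    context = "\n".join(f"[{i}] {h.title}" for i, h in enumerate(top, 1))
    body = llm(f"Question: {question}\nContext:\n{context}")
    citations = "\n".join(f"[{i}] {h.url}" for i, h in enumerate(top, 1))
    return f"{body}\n\nSources:\n{citations}"


# Stub connectors and a stub LLM so the sketch runs without any external service.
def fake_notion(q):
    return [Hit("notion", "Deploy runbook", "https://example.com/notion/1", 0.9)]


def fake_github(q):
    return [Hit("github", "Fix deploy script", "https://example.com/github/2", 0.7)]


def fake_llm(prompt):
    return "See the deploy runbook [1]."


reply = answer(
    "How do we deploy?",
    {"notion": fake_notion, "github": fake_github},
    fake_llm,
)
print(reply)
```

In a real deployment the stubs would be replaced by the Slack event handler, the retrieval layer, and an LLM client; the shape of the flow (fan out, rank, generate, cite) stays the same.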

The key problem is that most internal knowledge bots search only one tool and need a custom integration plus sophisticated retrieval logic for each new source.

Airweave gives you unified search across everything:

Connects to 50+ sources (GitHub, Linear, Slack, databases, and more).

New tools connect in minutes via OAuth or API key.

The index always stays fresh through incremental sync, only processing new or changed data.

All of this runs locally. It is fully open-source and self-hostable via Docker.
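To make the incremental sync idea concrete, here is one common way to process only new or changed data: fingerprint each document and skip anything whose fingerprint has not changed. This is an assumption about the general technique, not a description of how Airweave implements it; `fingerprint`, `incremental_sync`, and the `seen` store are hypothetical names.

```python
import hashlib


def fingerprint(text: str) -> str:
    """Content hash used to detect whether a document changed."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def incremental_sync(documents: dict[str, str], seen: dict[str, str]) -> list[str]:
    """Return ids of new or changed documents and update `seen` in place."""
    to_process = []
    for doc_id, text in documents.items():
        digest = fingerprint(text)
        if seen.get(doc_id) != digest:
            to_process.append(doc_id)
            seen[doc_id] = digest
    return to_process


seen = {}  # doc id -> last indexed fingerprint (in-memory for this sketch)
first = incremental_sync({"a": "hello", "b": "world"}, seen)
second = incremental_sync({"a": "hello", "b": "world!", "c": "new"}, seen)
print(first)   # ['a', 'b']
print(second)  # ['b', 'c']
```

On the second run, only the edited document and the new one are reprocessed; the unchanged one is skipped. A production system would persist `seen` and also handle deletions.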

How to build an OS for your AI workforce?

We’ve spent two years getting really good at building AI agents.

We have frameworks, workflow builders, drag-and-drop canvases, Python libraries, and multi-agent orchestrators. The tooling has never been more accessible. And yet, most organizations that deploy AI agents in production still treat them like a science project.

Something is missing, and it’s not another framework.

The problem isn’t building agents. It’s running them.

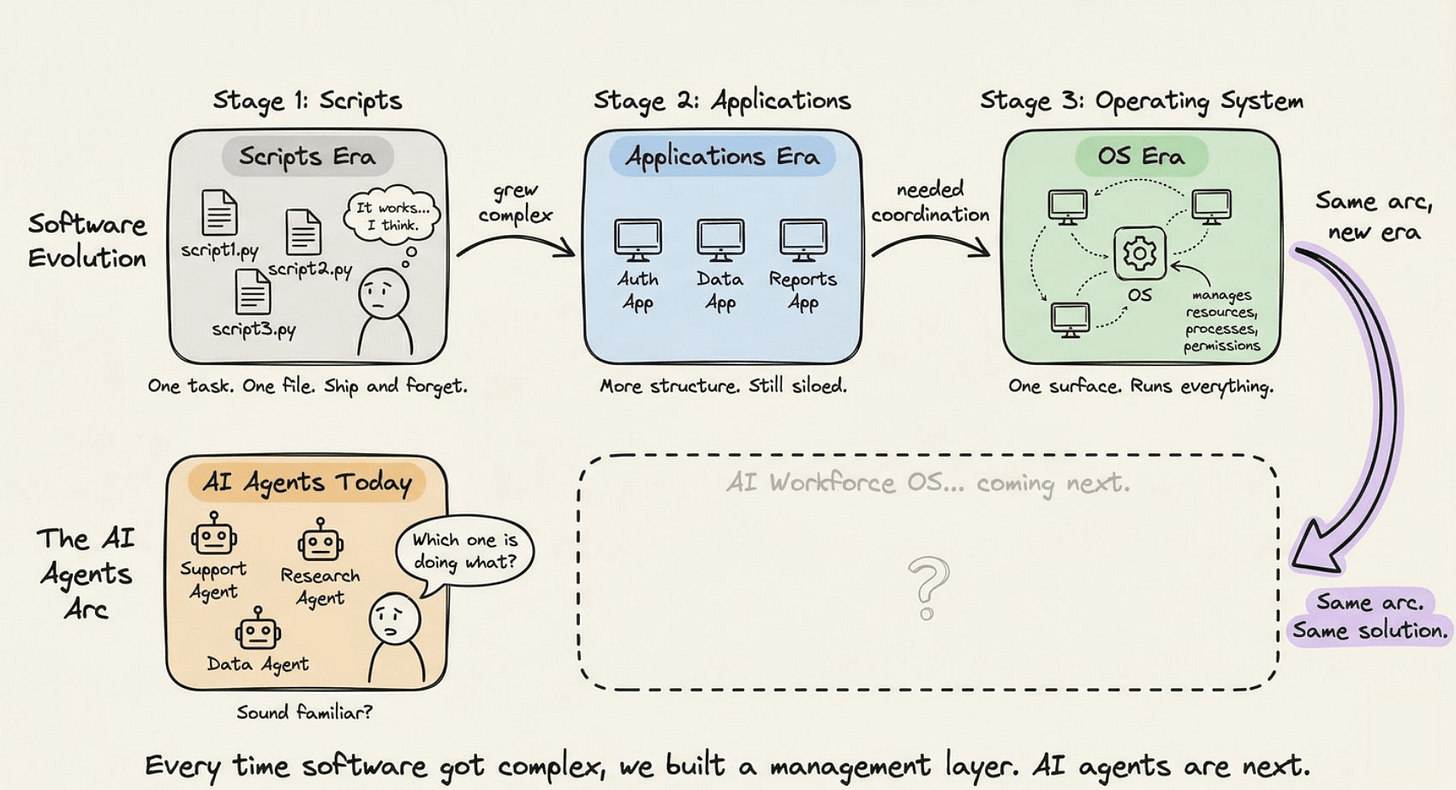

Think about how software development matured.

In the early days, developers wrote scripts. Then they wrote applications. Then systems got complex enough that you needed something to manage all those applications: an operating system. Something that handled resources, coordinated processes, and gave you a unified surface to interact with everything at once.

AI agents are following the exact same arc.

Right now, most teams are in the “writing scripts” phase. You build an agent. It does one thing well. You ship it. Then you build another. And another. Before long, you have a dozen agents doing a dozen different things, none of which know about each other, and no single place to manage all of them.

That’s not a workforce. That’s a collection of scripts with nothing coordinating them.

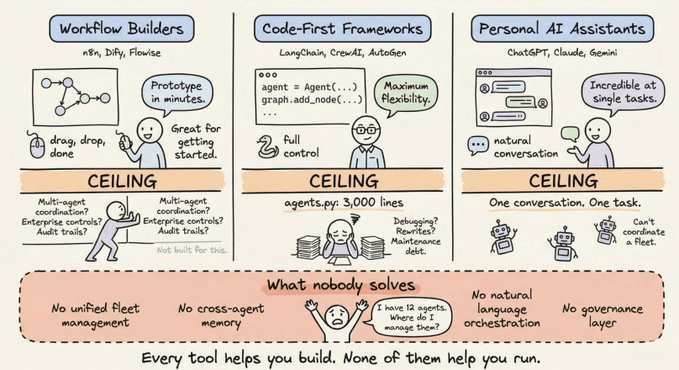

What the current landscape actually gives you

Let’s look at what’s available today, honestly.

Agent workflow builders (tools like n8n, Dify, Flowise) are great for prototyping. You drag nodes onto a canvas, wire them together, and you have something that looks like an agent workflow. The problem is they hit a ceiling fast. Complex multi-agent coordination, dynamic task assignment, enterprise access controls, audit trails? Most of these tools weren’t built for that.

Code-first frameworks (LangChain, CrewAI, AutoGen) give you power, but at a steep cost. You’re writing graph definitions in Python, configuring role-based agent patterns, managing state manually. Experienced developers will tell you: the moment your agents.py file crosses a few hundred lines, the abstraction starts working against you. Debugging is painful and rewrites become a recurring reality.

Personal AI assistants (OpenAI’s agents, Claude, Gemini in assistant mode) are remarkable at individual tasks: researching a topic, drafting a document, or running a single workflow. They’re designed to respond to you, one conversation at a time. But they weren’t designed to coordinate a team of specialized agents working in parallel on a shared goal.

Here’s the pattern across all of these:

They help you build or interact with one agent at a time

They have no unified way to manage a fleet of agents

They can’t assign new work to existing deployed agents through natural language

They have no shared memory, shared state, or shared governance layer

In other words, they solve the construction problem. Nobody has solved the operations problem.

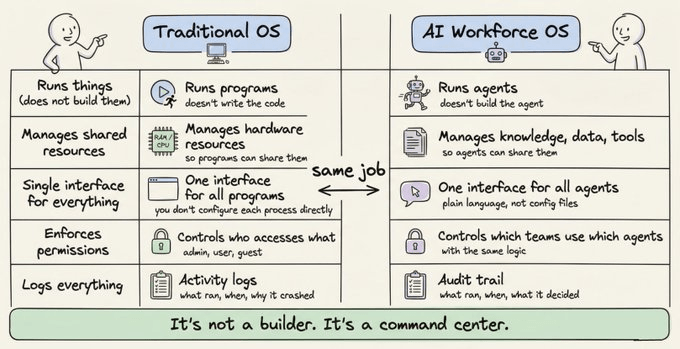

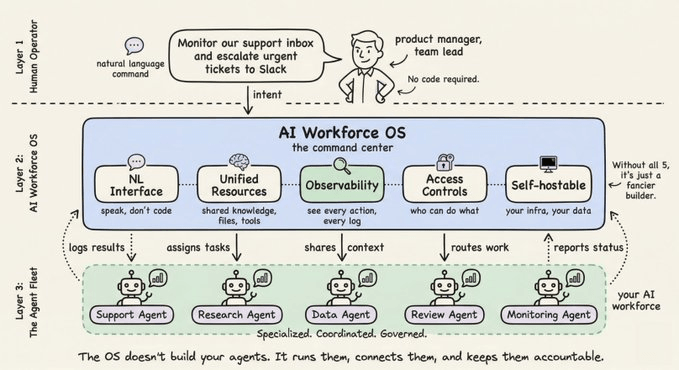

What an operating system for AI actually means

Let’s go back to first principles.

An operating system doesn’t build programs. It runs them and manages resources across programs. It gives you a single interface to see and control everything happening across your machine. It enforces permissions, logs activity, and handles failures gracefully.

An OS for AI agents would do the same thing, but for your workforce.

It would give you one place to:

Create, modify, and deploy agents without writing a single line of code

Direct your entire agent fleet through natural language

Assign tasks to specialized agents and monitor their progress

Connect agents to shared knowledge, shared data, and shared tools

Set permissions so different teams can only access relevant agents

See logs, audit what ran, and know exactly what each agent did

The key insight is this: an AI workforce OS is not a builder. It’s a command center.

The builder is still important. Agents need to be designed well. Workflows need to be structured. But once they’re running, you need a layer above them that lets you operate the whole system as a coherent unit.

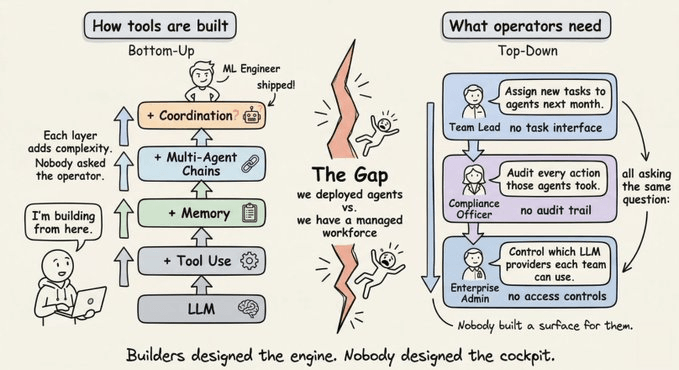

Why this gap exists in the first place

Most tooling in the AI agent space was designed from the bottom up.

Start with an LLM. Add tool use. Chain multiple LLM calls. Add memory. Coordinate multiple agents. Each step added complexity to an already-complex foundation.

Nobody stopped to ask: what does the person operating this system actually need?

A developer building an agent workflow doesn’t think about the team lead who needs to assign new tasks to that agent next month. An ML engineer designing a multi-agent pipeline doesn’t think about the compliance officer who needs to audit every action those agents took. A product manager deploying an AI research assistant doesn’t think about the enterprise admin who needs to control which LLM providers each department can use.

The result is a massive gap between “we deployed agents” and “we have a managed AI workforce.”

Most organizations fall into this gap and never climb out.

The architecture this new layer needs

If you were to design an OS for your AI workforce from scratch, it would need a few things.

A natural language command interface. Not a visual canvas you drag things around on. Not a Python SDK you write against. A conversational layer where you can say “create a workflow that monitors our support inbox and escalates urgent tickets to Slack” and have it happen. This is how people actually want to interact with their AI workforce.

Unified resource management. Every agent should share access to the same knowledge bases, file stores, databases, and integration credentials. Not siloed per-agent, but managed at the workspace level. When you build a new agent, it should be able to see and use what everything else already has access to.

Execution observability. You need to see, in one place, what every agent is doing, what it has done, and why it made the decisions it did. Not buried in individual logs across different services. A single, structured audit trail.

Enterprise-grade access controls. Different teams should be able to use different agents without stepping on each other. Admins should be able to restrict which models or tools any agent can use. Sensitive data should stay gated.

Self-hostability. For many serious enterprise deployments, sending data to a third-party SaaS is not an option. The OS needs to run in your own infrastructure.

These aren’t “nice to haves.” Without all of them, you don’t have an operating system. You have a slightly fancier builder.
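As a rough illustration of how three of these requirements fit together (workspace-level resources, access controls, and a single audit trail), here is a minimal sketch. Everything in it is hypothetical: the `Workspace` class, its fields, and the team, agent, and model names are invented for the example and do not describe any particular product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Workspace:
    resources: dict = field(default_factory=dict)      # shared by every agent
    permissions: dict = field(default_factory=dict)    # team -> set of agent names
    allowed_models: set = field(default_factory=set)   # admin-approved models
    audit_log: list = field(default_factory=list)      # one structured trail

    def record(self, team, agent, action, outcome):
        """Append one structured entry to the shared audit trail."""
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "team": team, "agent": agent, "action": action, "outcome": outcome,
        })

    def run(self, team, agent, model, task):
        """Check permissions, then log and simulate running the task."""
        if agent not in self.permissions.get(team, set()):
            self.record(team, agent, task, "denied: team lacks access")
            raise PermissionError(f"{team} cannot use {agent}")
        if model not in self.allowed_models:
            self.record(team, agent, task, "denied: model not allowed")
            raise PermissionError(f"model {model} is not allowed")
        self.record(team, agent, task, "ok")
        return f"{agent} ran '{task}' with access to {sorted(self.resources)}"


ws = Workspace(
    resources={"kb": "support-docs", "crm": "customer-db"},
    permissions={"support": {"triage-agent"}},
    allowed_models={"model-a"},
)
result = ws.run("support", "triage-agent", "model-a", "escalate urgent tickets")
try:
    ws.run("marketing", "triage-agent", "model-a", "export customers")
except PermissionError:
    pass  # the denial is still captured in the audit log
outcomes = [entry["outcome"] for entry in ws.audit_log]
print(result)
print(outcomes)
```

The design point is that permissions, shared resources, and logging live in the workspace rather than in each agent, so allowed and denied actions alike end up in the same trail.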

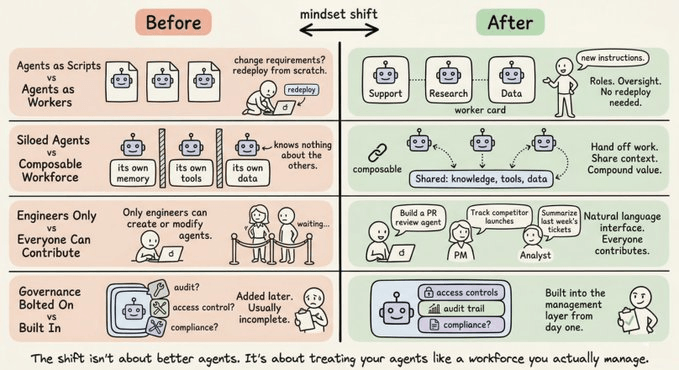

What this changes for teams building with AI today

This philosophy reframes how you think about agents entirely.

Instead of asking “how do I build this agent,” you start asking “what does my AI workforce look like six months from now, and how do I manage it?”

That shift matters because:

Agents become workers, not scripts. They have roles, responsibilities, and oversight. You don’t redeploy them from scratch when requirements change. You give them new instructions.

Your workforce is composable. A customer support agent, a research agent, and a data enrichment agent can share knowledge and hand off work to each other, because they’re all managed by the same layer.

Non-technical stakeholders can participate. When the interface is natural language, you don’t need an engineer to create a new agent or assign a new task. The product manager, the operations lead, and the analyst can all contribute.

Enterprise governance becomes tractable. Audit trails, access controls, and compliance aren’t bolted on afterward. They’re built into the management layer from the start.

This is what makes AI agents viable at scale. Not better models. Not more integrations. A coherent management layer that treats the entire fleet as a single, operable system.

Where things are heading

The bottleneck in AI adoption isn’t model capability anymore. It’s infrastructure maturity.

Organizations know what they want agents to do. They don’t know how to deploy, manage, and govern them at scale. The teams that figure out the operations side first will have a structural advantage that compounds over time.

The companies building toward this future aren’t starting with “how do we make better agents.” They’re starting with “what does the command center for our AI workforce look like.”

That is the right question.

If you want to see this philosophy already being built, Sim is doing exactly this. Their platform started as an open-source visual workflow builder and has evolved into what they call “the central intelligence layer for your AI workforce.” The latest version ships Mothership, a natural language command center that lets you create, manage, and direct your entire agent fleet from a single interface.

The fact that it’s open-source (27k+ GitHub stars, Apache 2.0) matters. You can self-host it, audit the code, and trust that you’re not locked in. For builders who want to try the concept without building it from scratch, it’s a solid starting point.

Thanks for reading!
