How Top AI Labs Are Building RL Agents in 2026

The era of not writing custom reward functions.

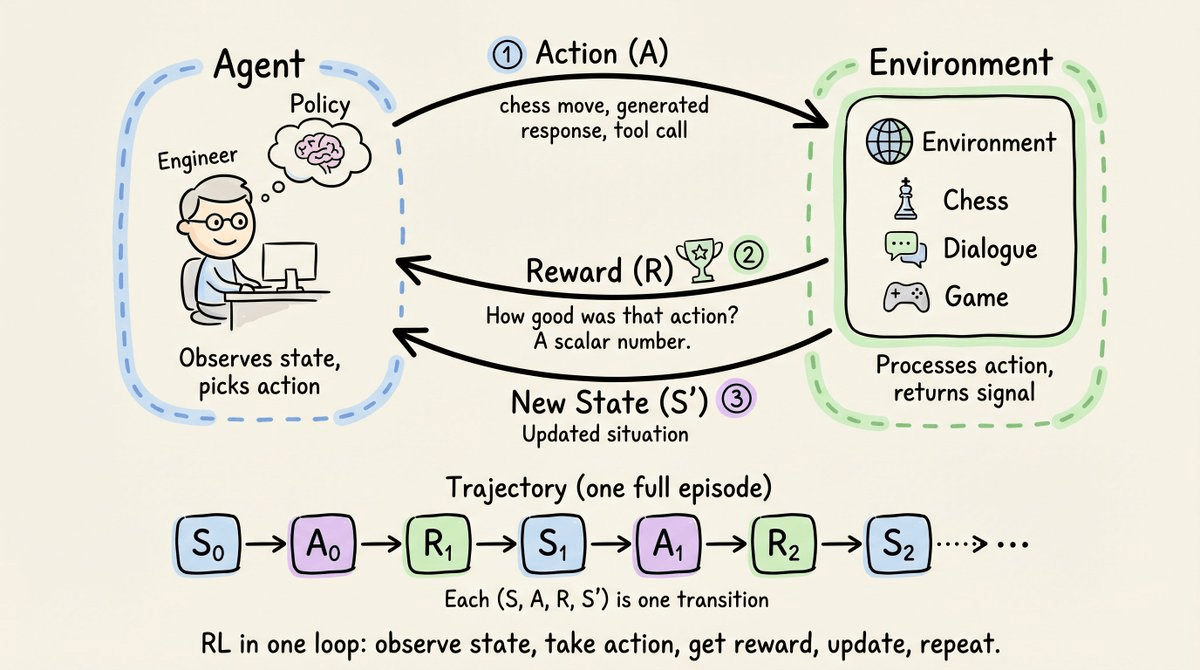

Reinforcement learning, at its core, is straightforward: a system takes an action, the environment rewards it, and the agent updates its behavior to maximize that reward over time.

The interaction above works in discrete steps. At each step, three things happen in order:

The agent observes the current state of the environment (S). A state is a description of the situation the agent is in, enough to decide what to do next. For instance, in chess, the state is the board position, and in a dialogue model, the state is the conversation history so far.

The agent picks an action (A) based on what it sees. The action is the agent’s output, the only way it can influence the environment. For instance, in chess, an action is a legal move. For an LLM, an action is the generated response.

The environment then does two things: it transitions to a new state (S’), and it emits a reward (R), a scalar number that evaluates the action. The next step begins, and the loop continues.

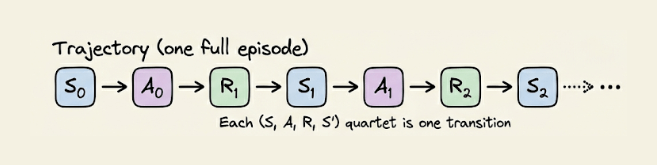

Stringing these steps together gives a trajectory:

Reading left to right, this is the entire history of the agent’s interaction with the environment. Each (S, A, R, S’) quartet is one transition, and much of RL is about learning from these transitions.

Applying RL to LLMs

When RL was first applied to LLMs, the environment was human preference.

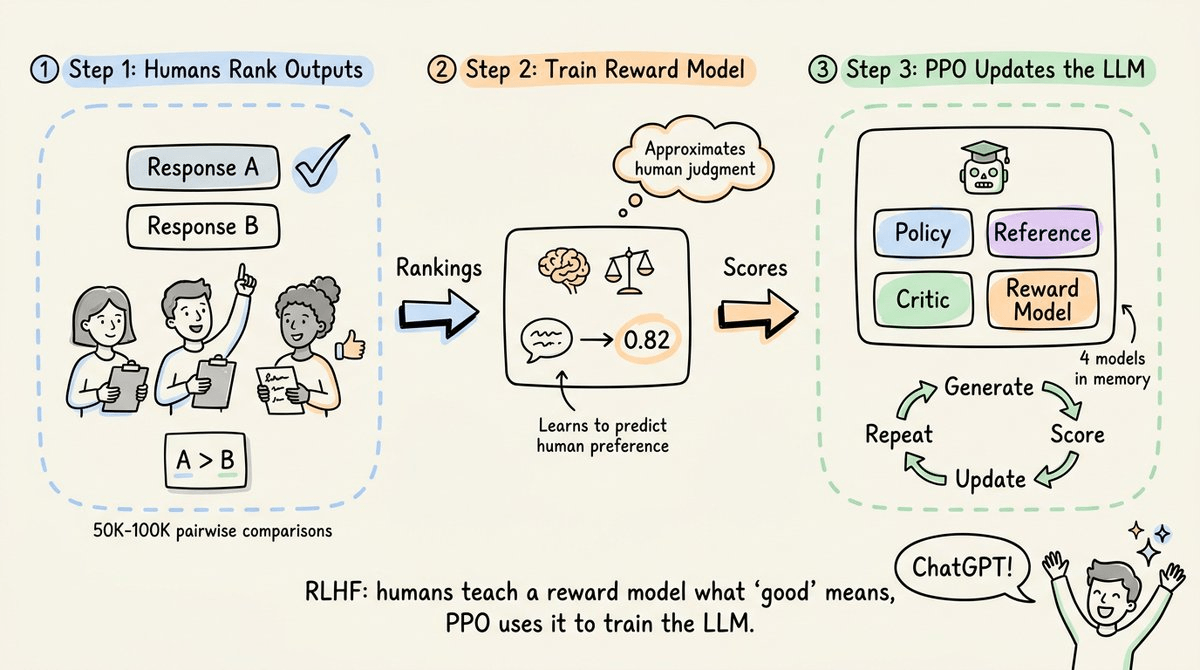

OpenAI’s InstructGPT (2022) introduced RLHF (Reinforcement Learning from Human Feedback), where:

humans ranked model outputs

those rankings trained a reward model

and PPO (Proximal Policy Optimization) used that reward model to fine-tune the LLM.

ChatGPT was built on this exact pipeline.

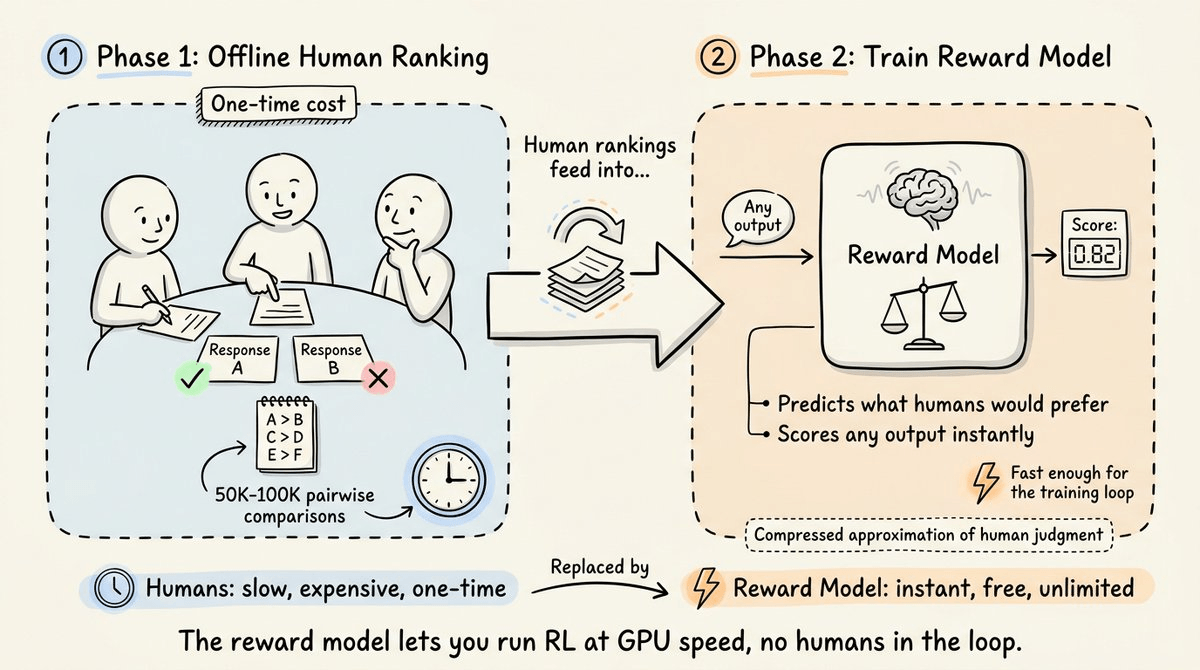

But humans can’t sit in the training loop rating every output in real time. If the model generates 16 responses per prompt across thousands of training steps, that’s hundreds of thousands of evaluations.

OpenAI solved this by splitting the process into two phases.

First, the offline phase. Here, humans ranked a relatively small set of model outputs and generated pairwise comparisons. This was the expensive human labor part, but it was a one-time cost.

Second, they trained a reward model on those rankings, which was a separate LLM that learned to predict what humans would prefer. Now you had a neural network that could score any output instantly, without waiting for a human. The reward model was a compressed approximation of human judgment, fast enough to sit inside the training loop.

With the reward model in place, PPO could run the actual RL training at GPU speed. The model generated responses, the reward model scored them, and PPO updated the weights, without extensive need for humans.

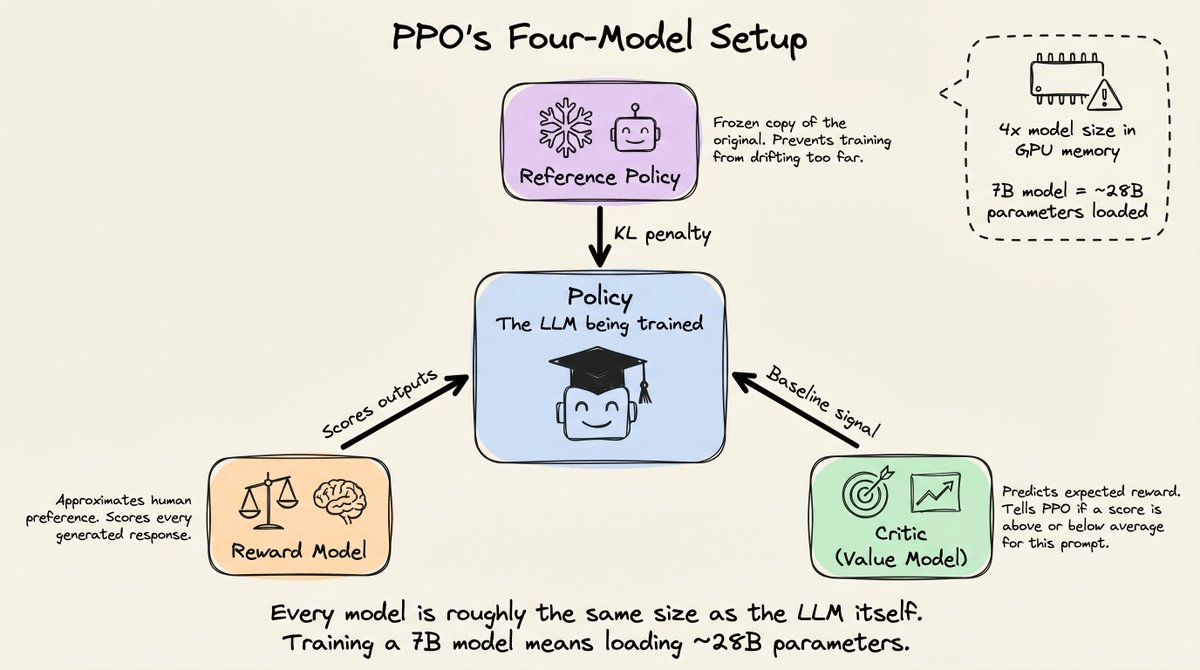

The cost, however, was that PPO required four full-size models in memory simultaneously.

The policy (the LLM being trained).

The reference policy (a frozen copy of the original, used to prevent training from drifting too far via a KL divergence penalty).

The reward model (the human-preference approximator discussed above to score every output).

And the critic, also called the value model (more about it below).

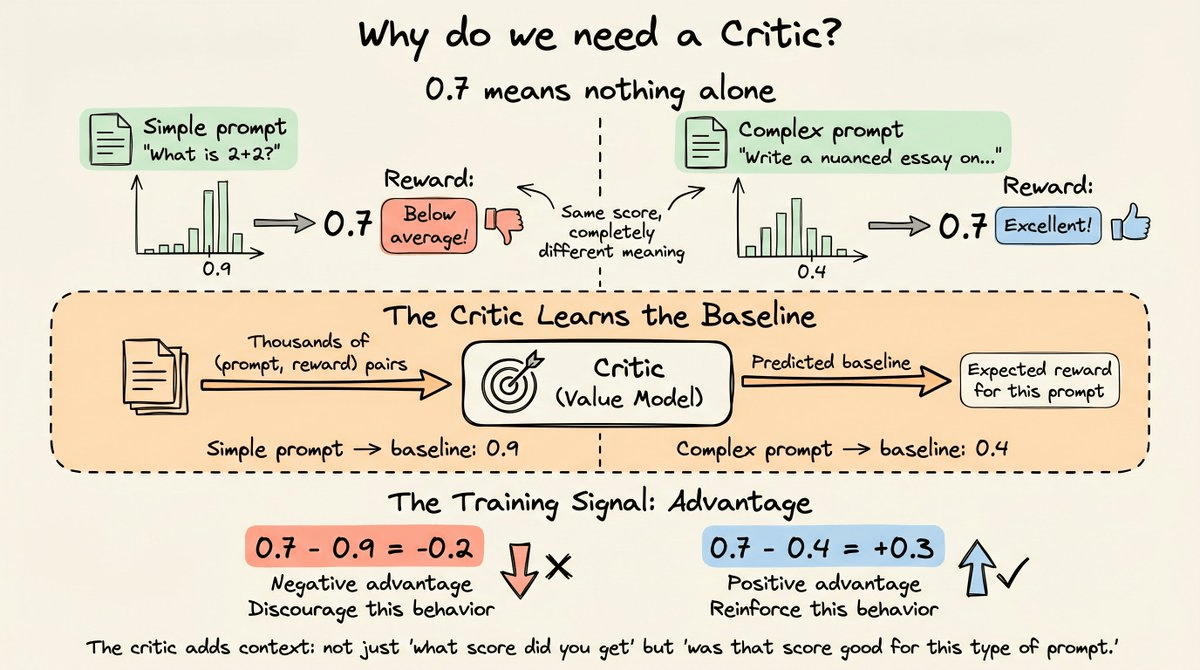

The critic exists to answer one question:

Was this reward good or bad relative to what we’d normally expect for this prompt?

We need this because a raw reward of 0.7 means nothing in isolation. For instance, on a simple factual question where most responses score 0.9, a 0.7 is below average.

But on a complex open-ended question where most responses score 0.4, a 0.7 is excellent.

The critic learns this baseline by observing thousands of (prompt, reward) pairs during training.

PPO’s actual training signal is the advantage, which is estimated as the reward minus the critic’s predicted baseline.

This makes the signal stable across prompts of different difficulty. But the cost involved here is that the critic is a full-size LLM itself, adding another model’s worth of memory.

For a 7B parameter LLM, that meant roughly 28B parameters in memory at once.

DeepSeek R1 breakthrough using verifiable rewards

In January 2025, DeepSeek released R1 with a fundamentally different approach to the reward signal.

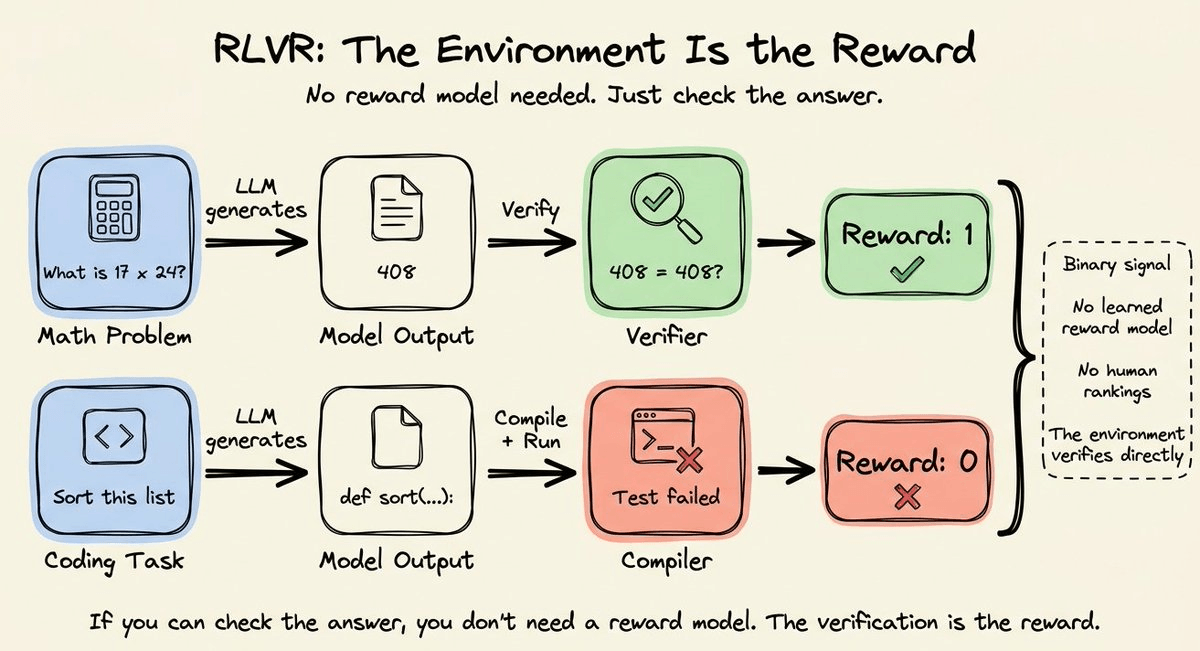

Instead of training a reward model from human preferences (Phases 1 and 2 of the RLHF pipeline), they used RLVR (Reinforcement Learning with Verifiable Rewards).

It’s a simple, rule-based verification where the environment itself provides the signal.

For instance:

For math problems, the verifier checked if the model’s answer matched the known solution.

For code, a compiler ran the output and returned pass or fail. Binary rewards: 1 for correct, 0 for wrong.

There are no human rankings or explicit reward models required since the ground truth was available (or inferable) to be used as the reward.

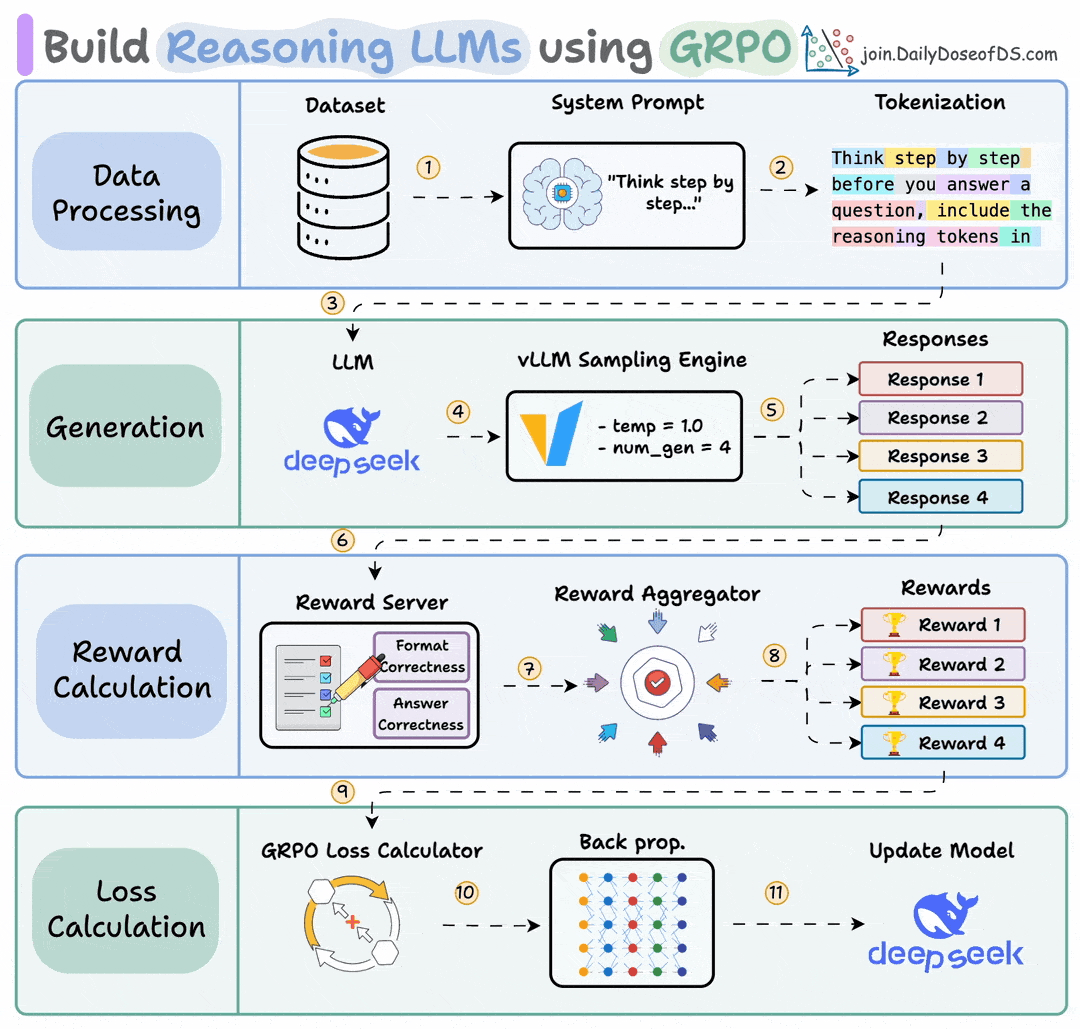

The RL optimizer was GRPO (Group Relative Policy Optimization), which stripped away most of PPO’s infrastructure.

It removed the critic model entirely.

Instead of training a separate model to predict expected reward per prompt, GRPO generated multiple responses to the same prompt (typically 16) and normalized rewards within each group.

If 4 out of 16 responses got the math problem right, those 4 received a positive advantage, and the other 12 received a negative advantage.

This step cut an entire full-size model from memory.

GRPO also removed the need for the learned reward model, since RLVR’s verifier handled scoring directly.

So the four-model PPO setup (policy + reference + critic + reward model) collapsed to just two, i.e., the policy being trained and a reference copy for KL regularization.

In fact, in practice, some implementations fold the reference into the policy checkpoint, bringing it close to a single-model setup.

With this setup. DeepSeek R1-Zero, trained with just GRPO and verifiable rewards (no supervised fine-tuning at all), went from 15.6% to 77.9% on AIME 2024 math problems.

With majority voting, it hit 86.7%, matching OpenAI’s o1.

The model developed self-verification, reflection, and chain-of-thought reasoning on its own, purely from the binary correct/incorrect signal, and nobody taught it to reason step by step.

The RL training loop discovered that reasoning improved the reward, so the model learned to reason.

RLVR with GRPO became the dominant approach for training reasoning models through 2025.

Every major lab released a reasoning variant following this recipe.

The problem

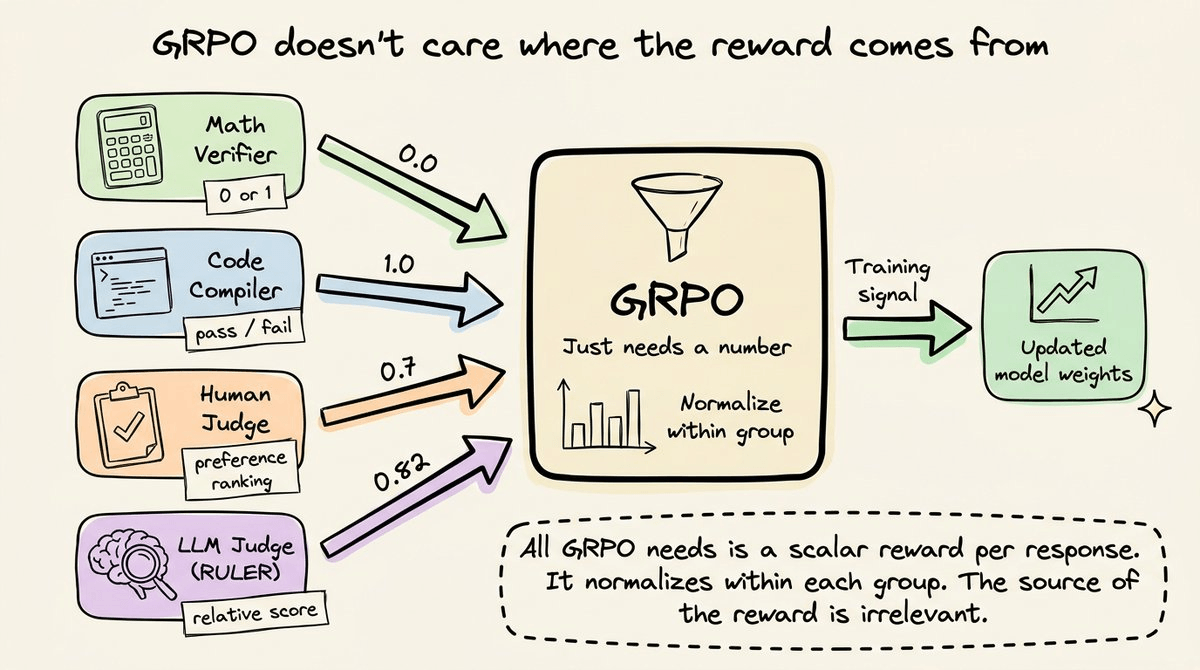

GRPO itself is general-purpose.

It doesn’t care whether the reward comes from a math verifier, a code compiler, a human, or a Python script.

It just needs a number for each response, and it normalizes within each group to produce the training signal.

But a clear bottleneck here is where these reward comes from.

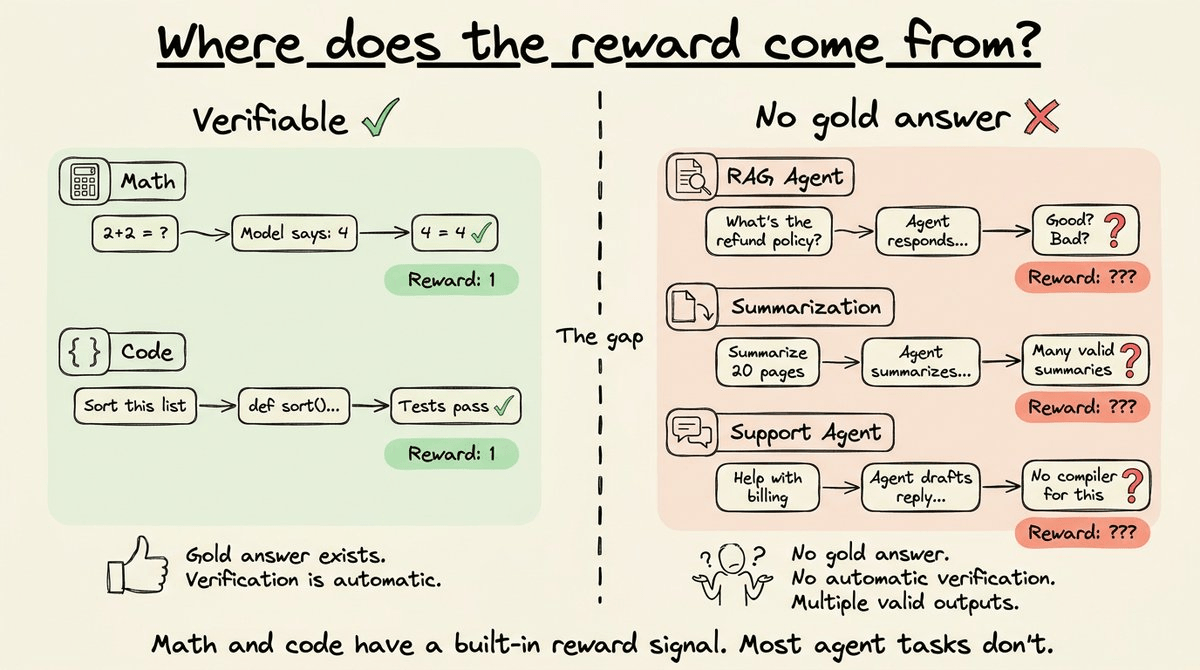

For math and code, this is fine since the environment provides a deterministic signal.

But agents that interact with real-world tools and data don’t produce outputs you can string-match against a gold answer.

A RAG agent retrieves context and generates a response. There’s no single correct answer to compare against. A customer support agent drafts a reply. There’s no compiler to run it through. A summarization agent condenses a 20-page document. There are many valid summaries, and no string-matching verifier can distinguish a good one from a mediocre one.

In these cases, the environment doesn’t hand you a reward signal the way a math problem does.

Of course, some agent tasks do have verifiable outcomes, and for these, RLVR works just fine, even with multi-step tool use. The verifiability depends on the task’s outcome, not on whether the model is acting as an agent.

But for the majority of agent workflows, the outcome is subjective or multi-dimensional.

Intuitively, GRPO is still the right fit here because Agents that take multiple steps, call tools, and compose responses would benefit from learning through exploration, trying different approaches, and getting reinforced for what works.

So, while the RL framework is the right fit, the missing piece is the scoring function.

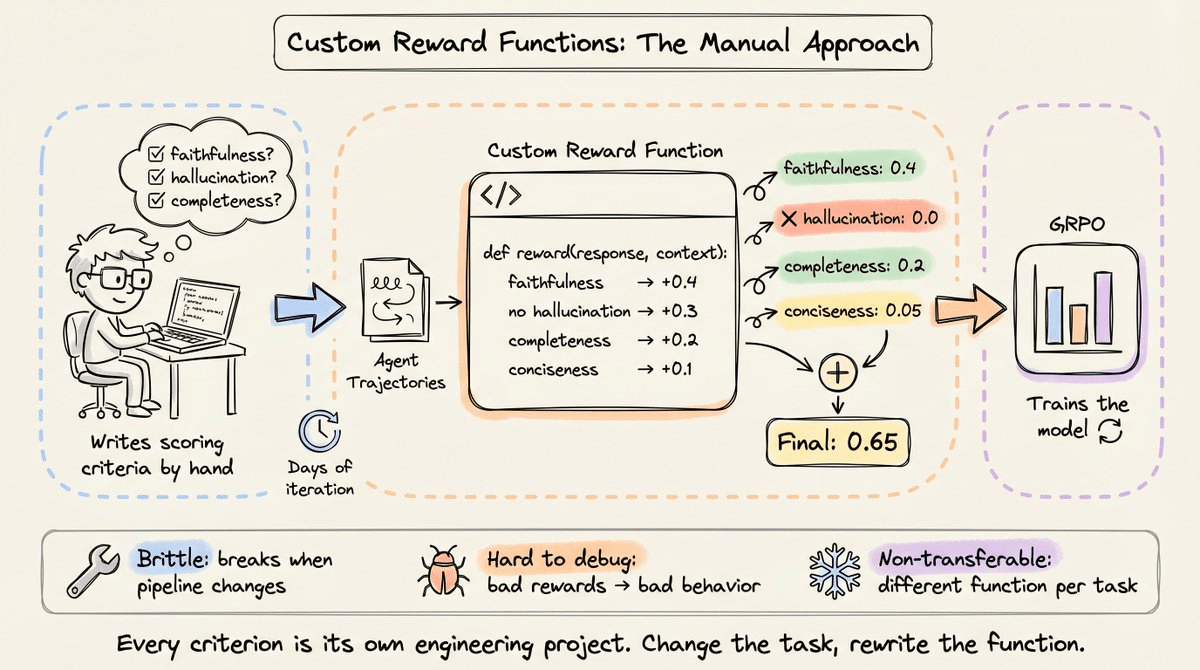

One solution is to write custom reward functions where Python code scores each output based on hand-defined criteria.

A RAG reward function might check whether the response used the retrieved context (faithfulness), penalize content that wasn’t in the context (hallucination), reward completeness, and handle cases where the context itself is ambiguous.

A tool-use reward function might score partial progress through a multi-step task, penalize unnecessary API calls, and measure whether the agent reached the correct final state.

Each criterion returns a partial score, and these get summed or weighted into a final reward.

This works, but it introduces its own set of problems.

Writing a good reward function takes days of iteration. Researchers need to anticipate edge cases, calibrate the weights between different criteria, and test that the function actually rewards the behavior you want.

A reward function that over-weights format compliance and under-weights faithfulness will train an agent that produces beautifully formatted hallucinations.

Reward functions are also brittle. If you change the retrieval pipeline, add a new tool, or modify the system prompt, the reward function needs to be rewritten.

Debugging is problematic too.

When the agent learns bad behavior during training, the cause could be the reward function, the training hyperparameters, the data, or something else entirely.

But because the reward function is custom code, you often can’t tell whether the function is measuring what you think it’s measuring until you’ve already trained a model on it and evaluated the outputs.

This is the primary reason RL has been widely adopted for verifiable tasks (math, code, logic) but not for agent workflows (RAG, customer support, tool use, summarization).

RLVR gave reasoning models a general-purpose, automatic reward signal where they could check the answer and return 0 or 1. No such equivalent exists for most agentic workflows.

The distinction isn’t about the model. The same Qwen 2.5 14B can serve both roles.

The distinction is about the task. Can we verify if an Agent is producing an output that can be automatically checked?

How are AI labs approaching this?

This isn’t a gap that only open-source practitioners are noticing.

The major AI labs have been converging on the same problem from different directions.

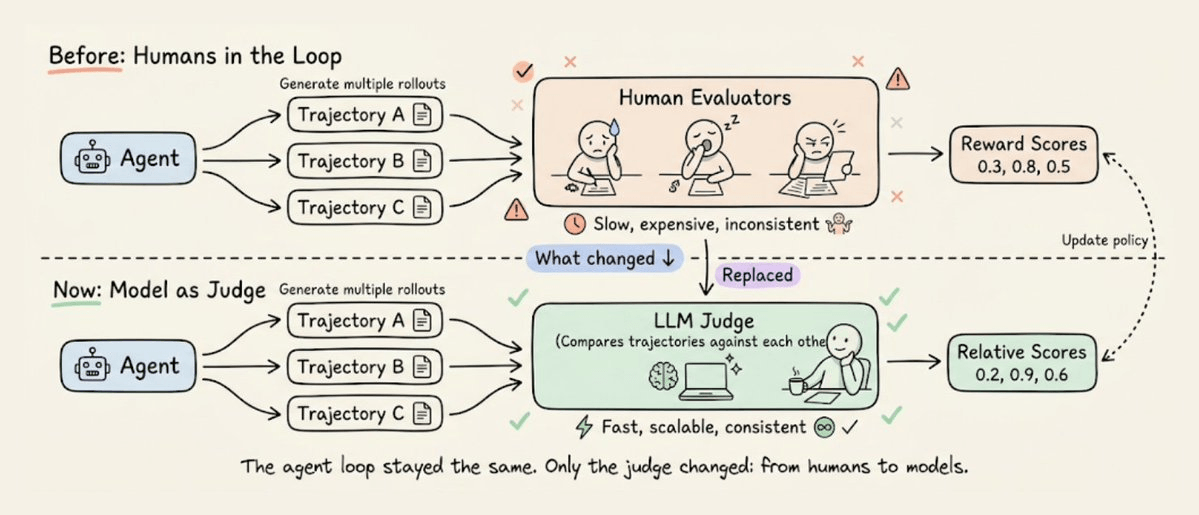

Anthropic demonstrated that you don’t need humans in the RL loop at all.

Their Constitutional AI work showed that if you write down a set of principles (a “constitution”), an AI can evaluate outputs against those principles and generate preference data for RL training.

The AI judged its own outputs against the written principles and used those judgments as the RL signal. This was a significant conceptual shift that a document of rules replaced an army of human evaluators.

OpenAI has been working on something similar internally. They are developing “Universal Verifiers,” a technique to extend RL beyond math and code into domains like biology, medicine, and general knowledge, where answers can’t be checked with a simple string match.

The details aren’t public, but the direction is clear that we need general-purpose reward signals that work across any domain, not just the ones with deterministic verifiers.

RULER

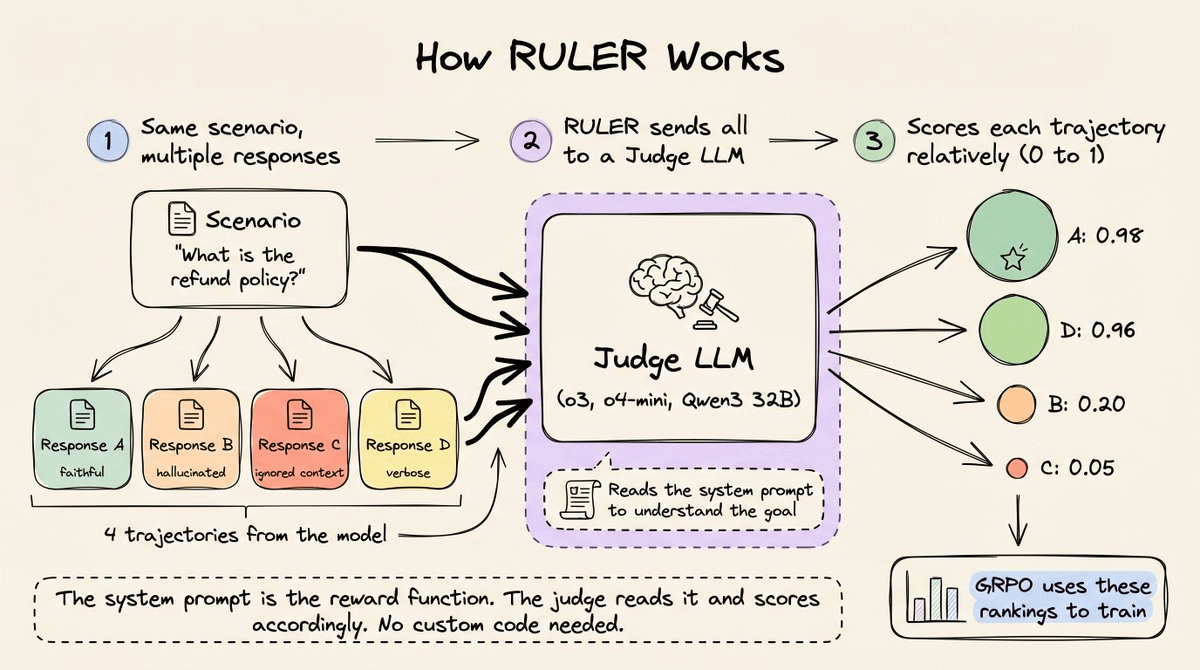

If you want to see this in practice, RULER, built into OpenPipe’s ART framework (open-source with 9k+ stars) is a general-purpose reward function that replaces all of that custom scoring code with a single function call.

It uses an LLM-as-judge to rank multiple trajectories, and it works by exploiting the same property that makes GRPO powerful, i.e., only relative rankings matter.

Here’s how it works step-by-step:

For each training step, you generate N trajectories for the same scenario (typically 4 to 8).

RULER sends all N to a judge LLM (like o3, o4-mini, or even a local Qwen3 32B).

The judge reads the agent’s system prompt to understand what the agent was supposed to do, then scores each trajectory from 0 to 1 relative to the others.

Two properties make this work:

1) Relative scoring is easier than absolute scoring.

LLMs struggle with absolute scoring because there’s no shared calibration.

But asking “which of these 4 responses best follows the system prompt’s instructions” is a comparison task, and LLMs do those consistently well.

RULER leans into this by presenting all trajectories together and asking the judge to rank them against each other.

2) GRPO normalizes within each group anyway.

Whether the best trajectory scored 0.9 or 0.3 in absolute terms doesn’t matter.

GRPO takes the scores within a group, computes the mean and standard deviation, and normalizes.

The training signal comes from the relative ordering by understanding which trajectories were above average and which were below. RULER’s relative rankings map directly onto what GRPO expects.

A rough walkthrough

Before jumping into code, let’s trace what happens conceptually. Say you’re training a RAG agent. At each training step, GRPO generates multiple responses for the same query:

Scenario: "What is the refund policy?"

Retrieved context: "Refunds within 30 days. Digital products non-refundable..."

(Faithful)

Response A: "Refunds within 30 days. Email support@example.com."

(hallucinated)

Response B: "Refunds within 30 days. Also store credit for 90 days."

(ignored context)

Response C: "Not sure, check the website."

(verbose but accurate)

Response D: "The policy states that refunds are available within..."In a traditional setup, you’d write a reward function to score each of these:

def reward_function(response, context):

score = 0.0

if uses_context(response, context):

score += 0.4

if not has_hallucination(response, context):

score += 0.3

if is_complete(response, context):

score += 0.2

if is_concise(response):

score += 0.1

return scoreEach of those helper functions (uses_context, has_hallucination, is_complete, is_concise) is its own engineering project.

You need to define what “uses context” means precisely, decide thresholds, handle edge cases, and test everything.

With RULER, you replace all of that with:

scored_group = await ruler_score_group(group, "openai/o3")The judge LLM reads the system prompt (”Answer using ONLY the retrieved context. Do not add information not in the context.”), reads all four responses and scores them.

The system prompt already defines faithfulness, hallucination, and completeness implicitly. The judge applies those criteria without implementing them in Python.

Trajectories and Groups

ART represents each agent response as a Trajectory, and it is a sequence of messages (system, user, assistant) packaged with metadata that GRPO needs for training.

Multiple trajectories for the same scenario form a TrajectoryGroup. This is the unit RULER scores and GRPO trains on.

# A single trajectory: one complete agent interaction

traj = art.Trajectory(

messages_and_choices=[

{"role": "system", "content": "You are a RAG support agent..."},

{"role": "user", "content": "What is the refund policy?\n\n[Context]: ..."},

Choice(finish_reason="stop", index=0,

message=ChatCompletionMessage(role="assistant", content="...")),

],

reward=0.0, # RULER fills this in

)

# A group: multiple trajectories for the same scenario

group = art.TrajectoryGroup([traj_a, traj_b, traj_c, traj_d])

# Score the entire group relatively

judged_group = await ruler_score_group(group, "openai/o3")The reward=0.0 initialization is a placeholder.

After

ruler_score_groupreturns, each trajectory’s reward field is updated with the judge’s score.The

ChoiceandChatCompletionMessageobjects are OpenAI’s standard types, so if you’re already using the OpenAI SDK for inference, these are the same objects you’re already working with.

Two concrete examples

RULER has two levels of API.

1) The lower-level ruler function works with plain message dictionaries, so no ART-specific objects are needed.

This is the fastest way to see what RULER actually does.

Let’s look at a minimal example with three trajectories for the same RAG query.

Below, we have defined three message lists: one is faithful, one hallucinates, and one ignores the context entirely.

message_lists = [

# Good: uses retrieved context accurately

[

{"role": "system", "content": "You are a RAG-based support agent. Use the retrieved context to answer user queries accurately."},

{"role": "user", "content": "What is our refund policy?\n\n[Retrieved context]: Refunds are available within 30 days of purchase. Digital products are non-refundable after download. Contact support@example.com to initiate."},

{"role": "assistant", "content": "You can get a refund within 30 days of purchase. However, digital products are non-refundable once downloaded. To start the process, email support@example.com."},

],

# Mediocre: partially uses context, adds hallucinated details

[

{"role": "system", "content": "You are a RAG-based support agent. Use the retrieved context to answer user queries accurately."},

{"role": "user", "content": "What is our refund policy?\n\n[Retrieved context]: Refunds are available within 30 days of purchase. Digital products are non-refundable after download. Contact support@example.com to initiate."},

{"role": "assistant", "content": "Our refund policy allows returns within 30 days. You can also get store credit for up to 90 days. Just call our hotline at 1-800-REFUND."},

],

# Bad: ignores context entirely

[

{"role": "system", "content": "You are a RAG-based support agent. Use the retrieved context to answer user queries accurately."},

{"role": "user", "content": "What is our refund policy?\n\n[Retrieved context]: Refunds are available within 30 days of purchase. Digital products are non-refundable after download. Contact support@example.com to initiate."},

{"role": "assistant", "content": "I'm not sure about the refund policy. You should check the website or contact someone from the billing team."},

],

]Next, we run the scoring:

from art.rewards import ruler

scores = await ruler(message_lists, "openai/o3")

for label, score in zip(["Faithful", "Hallucinated", "Ignored context"], scores):

print(label)

print("→", score.score)

print("→", score.explanation)This produces the following output:

Faithful:

→ 0.97

→ Accurately reflects the retrieved policy

details, complete and concise.

Hallucinated:

→ 0.45

→ Gives correct 30-day refund info but adds

unsupported details (90-day credit, hotline),

reducing accuracy.

Ignored context:

→ 0.05

→ Provides no useful information and ignores available context.Notice that we never wrote a faithfulness checker or coded a hallucination detector.

The system prompt mentioned “Use the retrieved context to answer user queries accurately,” and the judge applied that as the evaluation criteria.

The hallucinated response scored 0.45 (not zero) because it partially used the context. The 30-day refund part was correct.

The judge gave partial credit for what it got right and penalized what it invented.

That’s a nuanced distinction that would take significant engineering to encode in a rule-based reward function.

Moreover, the scores are spread across the 0-1 range: 0.97, 0.45, 0.05, unlike binary pass/fail.

RULER produces a gradient that reflects relative quality. GRPO can use this gradient to apply proportional updates to strongly reinforce the faithful behavior, mildly suppress the hallucination pattern (since it was partially correct), and strongly suppress the context-ignoring behavior.

2) The ruler function above works for understanding and experimentation, but ART’s training loop operates on Trajectory and TrajectoryGroup objects.

These carry the reward field that GRPO reads, debug logs for inspection, and the structure that model.train() expects.

After this, the higher-level ruler_score_group function handles the conversion.

Below, let’s look at the same RAG scenario structured the way you’d use it in a real training pipeline, now with 4 trajectories instead of 3.

# The system prompt defines the agent's goal

# RULER uses this as the implicit reward function

system_msg = {

"role": "system",

"content": (

"You are a RAG-based support agent. "

"Answer user queries using ONLY the retrieved context. "

"Do not add information that is not in the context."

),

}

user_msg = {

"role": "user",

"content": (

"What is the refund policy?\n\n"

"[Retrieved context]: Refunds are available within 30 days "

"of purchase. Digital products are non-refundable after "

"download. Contact support@example.com to initiate."

),

}

responses = [

"You can get a refund within 30 days of purchase. Digital products "

"are non-refundable once downloaded. Email support@example.com to start.",

"Refunds are available within 30 days. You can also get store credit "

"for up to 90 days, and our hotline is 1-800-REFUND.",

"I'm not sure about the refund policy. Please check the website or "

"contact the billing team for more details.",

"Based on the information I have, the refund policy states that "

"refunds are available within 30 days of purchase. It is important "

"to note that digital products cannot be refunded after they have "

"been downloaded. If you wish to initiate a refund, you should "

"reach out to support@example.com.",

]Now we have 4 trajectories instead of 3. The fourth is a verbose but accurate response that uses only the retrieved context but wraps it in unnecessary filler words/sentences.

Moving on, we define our Trajectories and Groups as we discussed earlier:

import art

from openai.types.chat.chat_completion import Choice

from openai.types.chat import ChatCompletionMessage

trajectories = []

for resp in responses:

traj = art.Trajectory(

messages_and_choices=[

system_msg, user_msg,

Choice(

finish_reason="stop", index=0,

message=ChatCompletionMessage(role="assistant", content=resp),

),

],

reward=0.0,

)

trajectories.append(traj)

group = art.TrajectoryGroup(trajectories)Finally, we run the scoring:

from art.rewards import ruler_score_group

judged_group = await ruler_score_group(group, "openai/o3", debug=True)With debug=True, RULER prints the judge’s raw reasoning with the actual scores.

This is the raw reasoning:

{

"scores": [

{

"trajectory_id": "1",

"explanation": "Accurately answers the question using only the retrieved context, concisely and completely.",

"score": 0.98

},

{

"trajectory_id": "2",

"explanation": "Includes unsupported details about store credit and a hotline that are not in the retrieved context, so it violates the instruction to use only the context.",

"score": 0.2

},

{

"trajectory_id": "3",

"explanation": "Does not answer the question despite the needed information being present in the retrieved context.",

"score": 0.05

},

{

"trajectory_id": "4",

"explanation": "Accurately and completely answers the question using only the retrieved context, though slightly more verbose than necessary.",

"score": 0.96

}

]

}And these are the scores (ranked):

Rank 1 | Score: 0.980 — Concise, faithful response

Rank 2 | Score: 0.960 — Verbose but accurate response

Rank 3 | Score: 0.200 — Hallucinated store credit and hotline

Rank 4 | Score: 0.050 — Ignored the retrieved context entirelyIf you notice closely...

The concise faithful response (0.98) scored just above the verbose accurate one (0.96). Both used only the retrieved context, both were correct, but the system prompt said “Answer using ONLY the retrieved context,” and the concise version did that more directly. The judge recognized verbosity as a minor quality issue, not a correctness issue. That’s a nuanced distinction that would be hard to encode in a scoring function because how do you write a rule that says “technically correct but unnecessarily wordy, penalize by 0.02”?

The hallucinated response dropped from 0.45 in the first experiment to 0.20 here. The difference is the system prompt. The first experiment said “Use the retrieved context to answer accurately.” This one says “Do not add information that is not in the context.” The stricter instruction produced stricter scoring. The judge adapted automatically. If you tighten your system prompt, RULER tightens its evaluation to match, without you changing any scoring code.

The context-ignoring response scored 0.05 in both experiments. When the answer is right there in the retrieved context, and the agent says “I’m not sure,” there’s no ambiguity regardless of how the system prompt is worded.

These scored trajectories are exactly what model.train() expects, so let’s look at that ahead.

The full training loop

To actually train with these scores, you replace the hardcoded responses with real model inference.

ART’s gather_trajectory_groups handles the orchestration.

Essentially, for each scenario, it generates a group of trajectories using the model’s current weights, scores them with RULER, and collects the results for GRPO:

for step in range(num_steps):

groups = await art.gather_trajectory_groups(

(

art.TrajectoryGroup(

rollout(model, scenario) for _ in range(4)

)

for scenario in scenarios

),

after_each=lambda g: ruler_score_group(

g, "openai/o3"),

)

await model.train(groups) # GRPO updates LoRA weightsIn every step, the model generates 4 responses per scenario using its current weights, RULER ranks them relatively, and GRPO reinforces the high-scoring behavior while suppressing the low-scoring behavior.

The agent gets better at following the system prompt’s instructions with every iteration.

Over multiple steps, the model learns the patterns that score well (faithfulness, conciseness, grounding in context) and unlearns the patterns that score poorly (hallucination, ignoring context, verbosity).

And notice that no reward function was defined anywhere in this code.

Custom rubrics

For most tasks, the system prompt provides enough signal for RULER to score effectively. But when you need more specific evaluation criteria, RULER supports custom rubrics:

custom_rubric = """

- Prioritize responses that are concise and clear

- Penalize responses that include emojis or informal language

- Reward responses that cite sources

"""

await ruler_score_group(group, "openai/o3", rubric=custom_rubric)The rubric is natural language, not Python, so iterating on it is fast.

You just change a sentence, rerun, and check the scores.

Compare this to editing a reward function where a misplaced weight or a buggy condition can silently teach the agent bad behavior that you won’t notice until after training.

Application to non-verifiable tasks

RULER is general-purpose. It works on any task, not just freeform ones where custom rewards are painful.

The practical question is when RULER adds value over simpler alternatives.

For purely deterministic tasks (did the SQL query return the right rows?), a binary verifier is cheaper and gives a cleaner signal.

For purely subjective tasks (was the summary good?), RULER is the only automatic option. For tasks that sit in between (did the agent find the right answer AND explain it well?), you can combine both:

judged_group = await ruler_score_group(group, "openai/o3")

for traj in judged_group.trajectories:

independent_reward = verify_correctness(traj) # binary 0/1

traj.reward += independent_rewardRULER preserves any rewards you assign during rollout under a separate metric, so you can layer LLM-judge scoring on top of deterministic verification without losing either signal.

Practical details

Here are some practical insights we have gathered based on using RULER:

You don’t need the most expensive model as the judge. Cheaper models like Qwen3 32B often work well. You can also use Claude, local models through Ollama, or any model supported by LiteLLM. The choice is a cost-quality tradeoff, not a hard requirement.

4 to 8 trajectories per group is the recommended range. Fewer than 4 gives the judge too little to compare against. More than 8 can confuse the judge and increase the cost without proportional benefit.

When all trajectories in a group share the same system prompt and user message (which they usually do), RULER deduplicates the common prefix automatically. The judge only sees the shared context once, followed by the different responses. This cuts token usage significantly for long system prompts or multi-turn conversations.

RULER caches judge responses to disk. If you rerun the same trajectories, it won’t hit the API again. This matters during debugging when you’re iterating on the system prompt or rubric.

The bottleneck in applying RL to agents was never the optimization algorithm.

GRPO handles that well.

It was always the reward signal.

RLVR solved this for verifiable tasks by letting the environment score outputs directly.

RULER solves it for every task (verifiable or non-verifiable) by letting an LLM judge score outputs relatively.

The full implementation is in the ART repository, along with Colab notebooks that walk you through the training loop end-to-end.

Repo: https://github.com/OpenPipe/ART (don’t forget to star it ⭐️)

Thanks for reading!