MCP & Skills for AI agents

...explained visually!

DailyDoseofDS is now on Instagram!

This newsletter regularly breaks down RAG architectures, AI agents, LLM internals, and everything in between.

Now we’re bringing all of that to Instagram too, in a format that’s quick to consume and hard to ignore.

We’re already 240 posts deep with content on RAG vs HyDE, agentic RAG, specialized AI models, prompt techniques, Bayesian optimization, active learning, and a lot more.

You can find the account and follow it here →

MCP & Skills for AI agents

MCP and Skills aren’t the same thing!

Conflating them is one of the most common mistakes we see when people start building AI agents seriously.

This visual explains how they work under the hood!

Let’s break both down from scratch!

We covered all these details (with implementations) in the MCP course.

It covers fundamentals, architecture, and context management; JSON-RPC communication; building a fully custom, local MCP client; tools, resources, and prompts; sampling; testing, security, and sandboxing in MCP; integration with the most widely used agentic frameworks like LangGraph, LlamaIndex, CrewAI, and PydanticAI; and more.

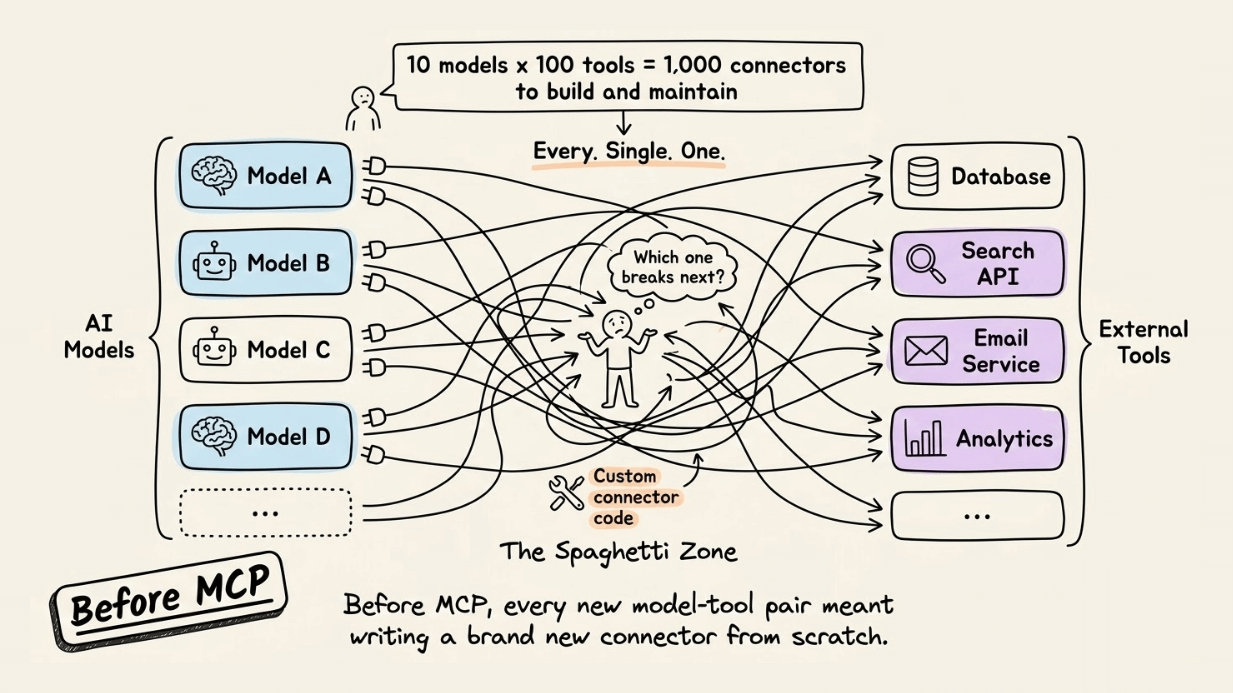

Before MCP existed, connecting an AI model to an external tool meant writing custom integration code every single time.

For instance, connecting 10 models to 100 tools meant 10 × 100 = 1,000 unique connectors to build and maintain.

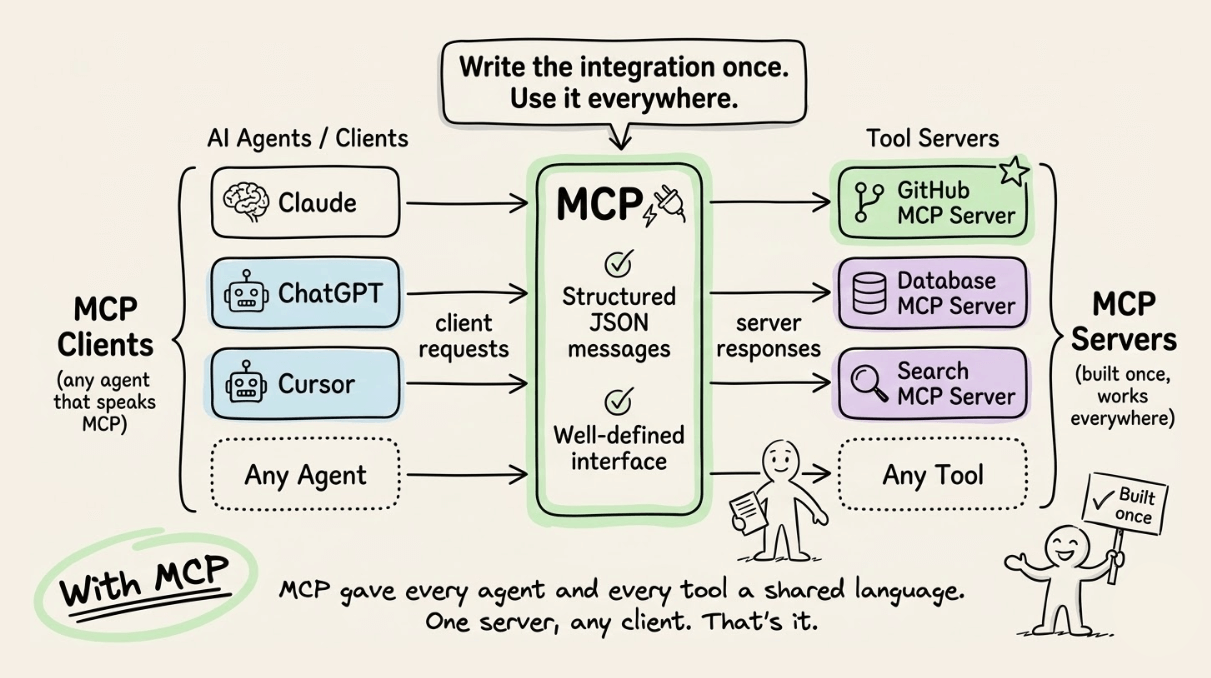

MCP fixed this with a shared communication standard.
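To make the arithmetic concrete, here is a quick sketch comparing the two setups. The function names are ours, and the "shared protocol" count assumes each model implements the protocol once as a client and each tool implements it once as a server, which is the usual way a shared standard cuts integration work.

```python
def point_to_point_connectors(num_models: int, num_tools: int) -> int:
    """Without a shared standard, every model needs its own connector to every tool."""
    return num_models * num_tools


def shared_protocol_adapters(num_models: int, num_tools: int) -> int:
    """Assumption: one client implementation per model plus one server per tool."""
    return num_models + num_tools


if __name__ == "__main__":
    models, tools = 10, 100
    print(point_to_point_connectors(models, tools))  # 1000
    print(shared_protocol_adapters(models, tools))   # 110
```

The gap widens as either side grows, since one count is a product and the other is a sum.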

Every tool became a “server” that exposed its capabilities. Every AI agent became a “client” that knew how to ask. They talked through structured JSON messages over a clean, well-defined interface.

For instance, one could build a GitHub MCP server once, and it worked with Claude, ChatGPT, Cursor, or any other agent that spoke MCP. That’s the core value: write the integration once, use it everywhere.
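Here is roughly what those structured messages look like. MCP messages follow JSON-RPC 2.0, and `tools/list` and `tools/call` are the methods a client uses to discover and invoke tools. Everything else in this sketch is illustrative: the `create_issue` tool, its arguments, and the `make_request` helper are hypothetical, and the transport (how the message actually reaches the server) is left out.

```python
import json
from itertools import count
from typing import Optional

# JSON-RPC requests carry an id so the response can be matched to the request.
_request_ids = count(1)


def make_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request as a plain dict (hypothetical helper)."""
    request = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        request["params"] = params
    return request


# Step 1: the client asks the server which tools it exposes.
list_tools = make_request("tools/list")

# Step 2: the client invokes one tool by name with structured arguments.
# The tool name and arguments below are made up for illustration.
call_tool = make_request(
    "tools/call",
    {
        "name": "create_issue",
        "arguments": {"repo": "example-org/example-repo", "title": "Fix typo in README"},
    },
)

if __name__ == "__main__":
    print(json.dumps(list_tools, indent=2))
    print(json.dumps(call_tool, indent=2))
```

Because every server answers the same two methods, the client code stays the same no matter which tool sits on the other end.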

But here’s where most explanations stop short.

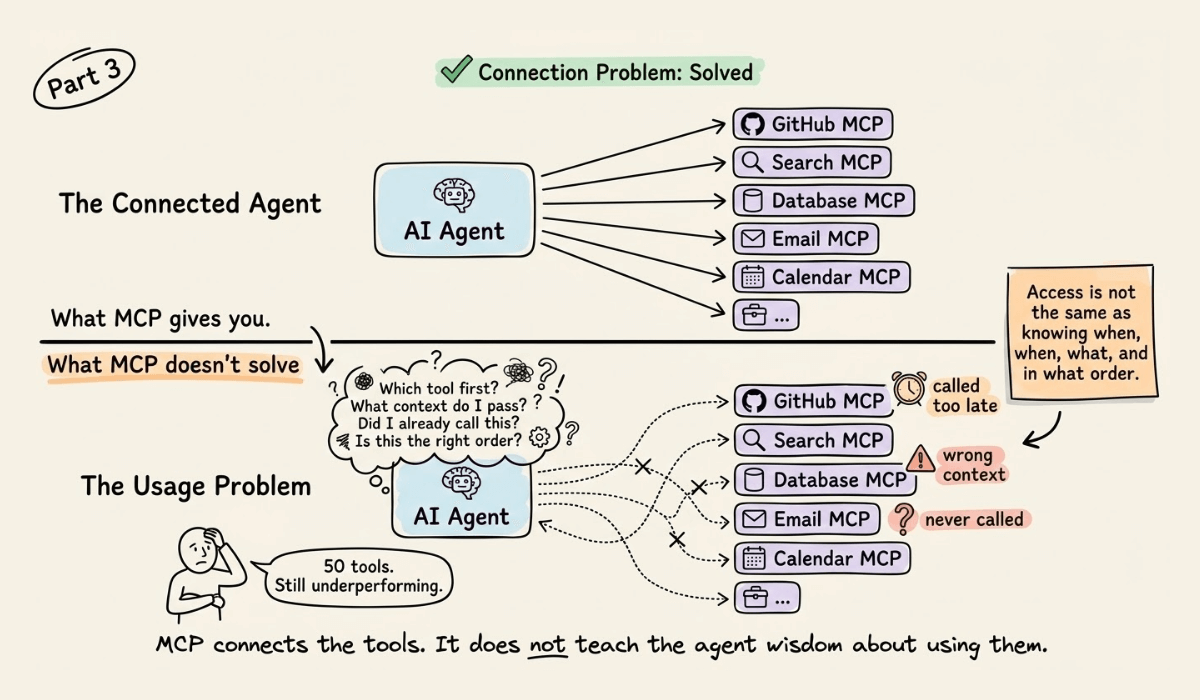

MCP solved the connection problem. But it did not solve the usage problem.

This means you can hand an agent 50 perfectly wired MCP tools, and it can still underperform if it doesn’t know when to call which tool, in what order, and with what context.

That’s the gap Skills are meant to fill.

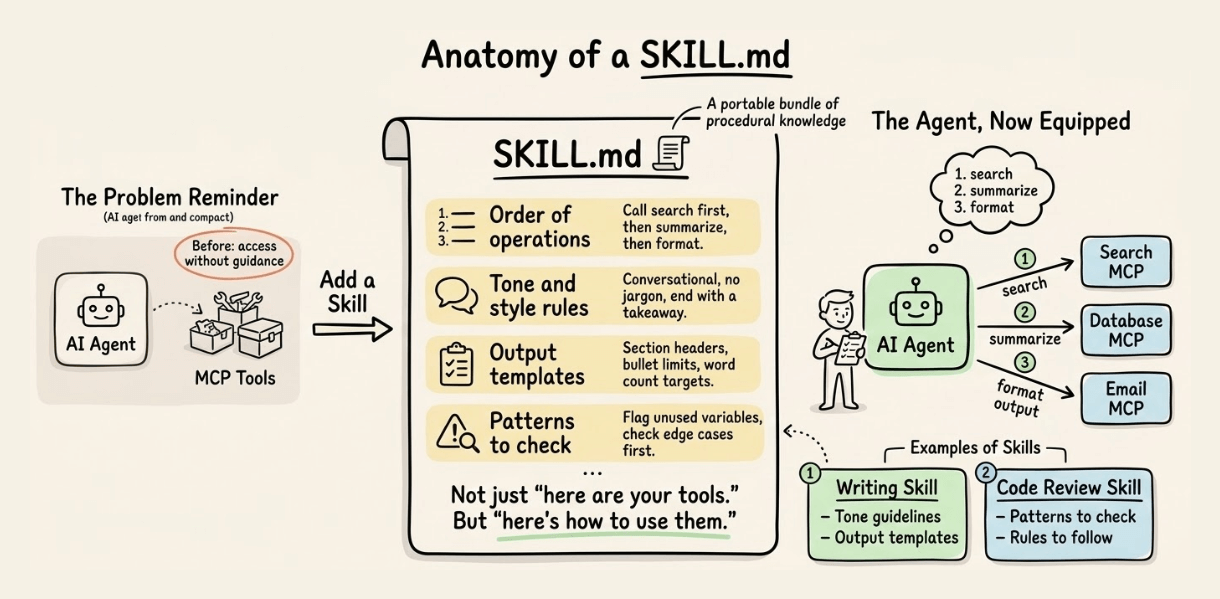

A Skill is a portable bundle of procedural knowledge. Think of a SKILL.md file that tells an agent not just “here are your tools” but “here’s how to use them for this specific task.” A writing skill bundles tone guidelines and output templates. A code review skill bundles patterns to check and rules to follow.
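To see what such a bundle might look like, here is a minimal sketch of a code review skill and a tiny loader for it. We assume a common layout: a short front matter block with a `name` and `description`, followed by free-form instructions. The skill content and the `parse_skill` helper are made up for illustration, and a real loader would use a proper YAML parser.

```python
from typing import Dict, Tuple

# A hypothetical SKILL.md, inlined as a string so the example is self-contained.
SKILL_MD = """\
---
name: code-review
description: Review a pull request for bugs, style issues, and missing tests.
---
# Code review

1. Read the full diff before commenting.
2. Check error handling and edge cases.
3. Flag any behavior change that lacks a test.
"""


def parse_skill(text: str) -> Tuple[Dict[str, str], str]:
    """Split a skill file into (metadata, instructions).

    Handles only flat `key: value` front matter lines.
    """
    _, front_matter, body = text.split("---", 2)
    metadata: Dict[str, str] = {}
    for line in front_matter.strip().splitlines():
        key, _, value = line.partition(":")
        metadata[key.strip()] = value.strip()
    return metadata, body.strip()


metadata, instructions = parse_skill(SKILL_MD)

if __name__ == "__main__":
    print(metadata["name"])
    print(metadata["description"])
    print(instructions)
```

Notice that nothing here connects to a tool. The skill is pure procedure: what to check, in what order, and what to flag.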

MCP gives the agent a hand. Skills give it muscle memory.

Together, they form the full capability stack for a production AI agent:

- MCP handles tool connectivity (the wiring layer)
- Skills handle task execution (the knowledge layer)
- The agent orchestrates both using its context and reasoning

This is why advanced agent setups increasingly ship both: MCP servers for integrations and SKILL.md files for domain expertise.
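As a rough sketch of how the two layers can meet in the agent's context, the snippet below assembles a single prompt from tool descriptions (the wiring layer) and skill instructions (the knowledge layer). All names here are hypothetical, and real agent runtimes differ in how and when they load each piece. This only illustrates the division of labor.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Tool:
    """What an MCP server advertises: a callable capability."""
    name: str
    description: str


@dataclass
class Skill:
    """What a SKILL.md contributes: instructions for a specific task."""
    name: str
    instructions: str


def build_context(task: str, tools: List[Tool], skills: List[Skill]) -> str:
    """Combine tools, skills, and the task into one prompt string."""
    tool_lines = "\n".join(f"- {t.name}: {t.description}" for t in tools)
    skill_blocks = "\n\n".join(f"## {s.name}\n{s.instructions}" for s in skills)
    return "\n\n".join(
        [
            "# Available tools\n" + tool_lines,
            "# Skills\n" + skill_blocks,
            "# Task\n" + task,
        ]
    )


context = build_context(
    task="Review the open pull request and file issues for any bugs.",
    tools=[Tool("create_issue", "Open an issue in a repository.")],
    skills=[Skill("code-review", "Read the full diff first, then check edge cases.")],
)

if __name__ == "__main__":
    print(context)
```

The model then reasons over all three sections: the tools say what it can do, the skill says how to do it well, and the task says what to do now.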

If you’re building with agents, skills.sh is a repository of 85k+ skills that you can use with any agent.

Also, we covered all these details (with implementations) in the MCP course.

Part 1 covered fundamentals, architecture, context management, etc. →

Part 2 covered core capabilities, JSON-RPC communication, etc. →

Part 4 built a full-fledged MCP workflow using tools, resources, and prompts →

Part 5 taught how to integrate Sampling into MCP workflows →

Parts 6 and 7 covered testing, security, and sandboxing in MCP workflows →

Part 8 integrated MCPs with the most widely used agentic frameworks: LangGraph, LlamaIndex, CrewAI, and PydanticAI →

Part 9 covered using LangGraph MCP workflows to build a comprehensive real-world use case →

Thanks for reading!