Reinforcement Learning Nanodegree

...covered with implementation.

Today, we’re launching a brand-new hands-on series on reinforcement learning, built from the ground up.

Read Part 1 of the Reinforcement Learning course here →

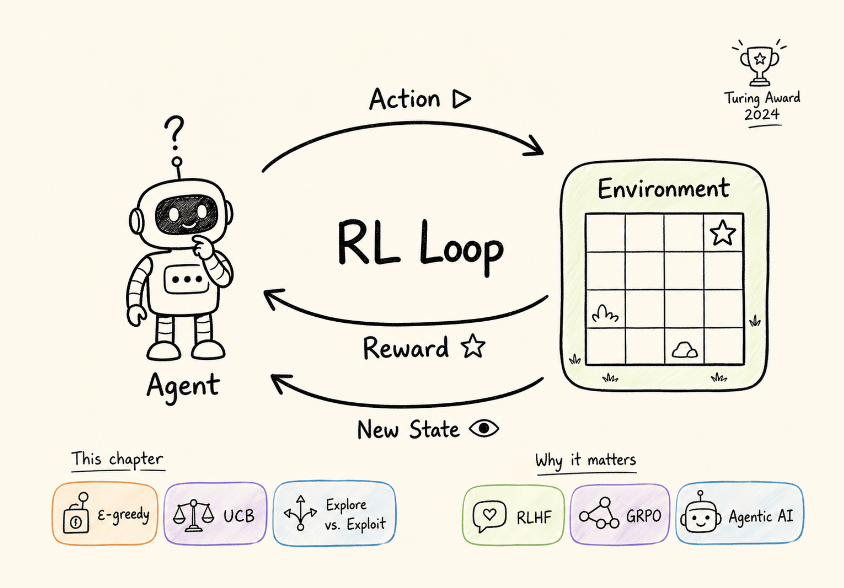

This first chapter covers:

- what makes RL fundamentally different from supervised and unsupervised learning
- the agent-environment interaction loop
- the exploration-exploitation tradeoff
- multi-armed bandits as the simplest RL setting
- four action-selection strategies (greedy, ε-greedy, optimistic initialization, UCB)
- a complete hands-on implementation of the classic 10-armed testbed, with results and analysis.
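For a flavor of the 10-armed testbed, here is a minimal sketch of an ε-greedy agent with sample-average value estimates. It assumes the standard setup from Sutton and Barto: true action values are drawn from N(0, 1), and each reward is drawn from N(q*(a), 1). The function `run_epsilon_greedy` and its parameters are hypothetical illustrations, not the course's own code.

```python
import random


def run_epsilon_greedy(k=10, steps=1000, epsilon=0.1, seed=0):
    """Run one epsilon-greedy agent on one randomly generated k-armed bandit.

    Returns (average_reward, fraction_of_steps_choosing_the_optimal_arm).
    """
    rng = random.Random(seed)
    # True action values q*(a), drawn from a standard normal (testbed assumption).
    q_true = [rng.gauss(0.0, 1.0) for _ in range(k)]
    best_action = max(range(k), key=lambda a: q_true[a])

    q_est = [0.0] * k  # Q(a): sample-average estimate of each arm's value
    counts = [0] * k   # N(a): how many times each arm has been pulled
    total_reward = 0.0
    optimal_picks = 0

    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: pick any arm uniformly at random.
            action = rng.randrange(k)
        else:
            # Exploit: pick the arm with the highest estimate, breaking ties randomly.
            best_q = max(q_est)
            ties = [a for a in range(k) if q_est[a] == best_q]
            action = rng.choice(ties)

        # Reward for the chosen arm is drawn from N(q*(a), 1).
        reward = rng.gauss(q_true[action], 1.0)

        # Incremental sample-average update: Q <- Q + (R - Q) / N.
        counts[action] += 1
        q_est[action] += (reward - q_est[action]) / counts[action]

        total_reward += reward
        optimal_picks += int(action == best_action)

    return total_reward / steps, optimal_picks / steps


if __name__ == "__main__":
    # Average over many independently generated bandit problems, as the testbed does.
    runs = 100
    for eps in (0.0, 0.01, 0.1):
        results = [run_epsilon_greedy(epsilon=eps, seed=s) for s in range(runs)]
        avg_reward = sum(r for r, _ in results) / runs
        avg_optimal = sum(o for _, o in results) / runs
        print(f"epsilon={eps}: avg reward {avg_reward:.3f}, optimal action {avg_optimal:.1%}")
```

Setting `epsilon=0.0` gives the purely greedy strategy. The other strategies in the list mainly change either the initial estimates (optimistic initialization) or the action-selection rule (UCB).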


Why care?

Look at what has happened in the past two years.

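DeepSeek-R1 used GRPO (Group Relative Policy Optimization) to train its reasoning.

ChatGPT was shaped by RLHF (reinforcement learning from human feedback).

Claude was trained with Constitutional AI, which relies on RL from AI feedback.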

Every frontier LLM released recently has some form of reinforcement learning in its post-training pipeline.

RL is no longer a niche subfield for robotics and game-playing. It is a core component of how the most capable AI systems are built today.

Google Trends reflects this.

Search interest for “reinforcement learning” was nearly flat from 2004 to 2024. In the past year, it has gone vertical, hitting an all-time high.

The demand for RL expertise has followed.

Look at ML engineering roles at labs like OpenAI, Anthropic, and DeepMind, or on any team working on post-training, alignment, or agentic systems, and RL fluency consistently shows up as a requirement.

Understanding how reward signals shape model behavior, how policy optimization works, and how exploration interacts with credit assignment is becoming as fundamental as understanding backpropagation was five years ago.

This series is structured the same way as our MLOps/LLMOps course: concept by concept, with clear explanations, diagrams, math where it matters, and hands-on implementations you can run.

And no prior RL background is needed.

You can start reading Part 1 here →

Over to you: What topics would you like us to cover in this RL series?

Thanks for reading!