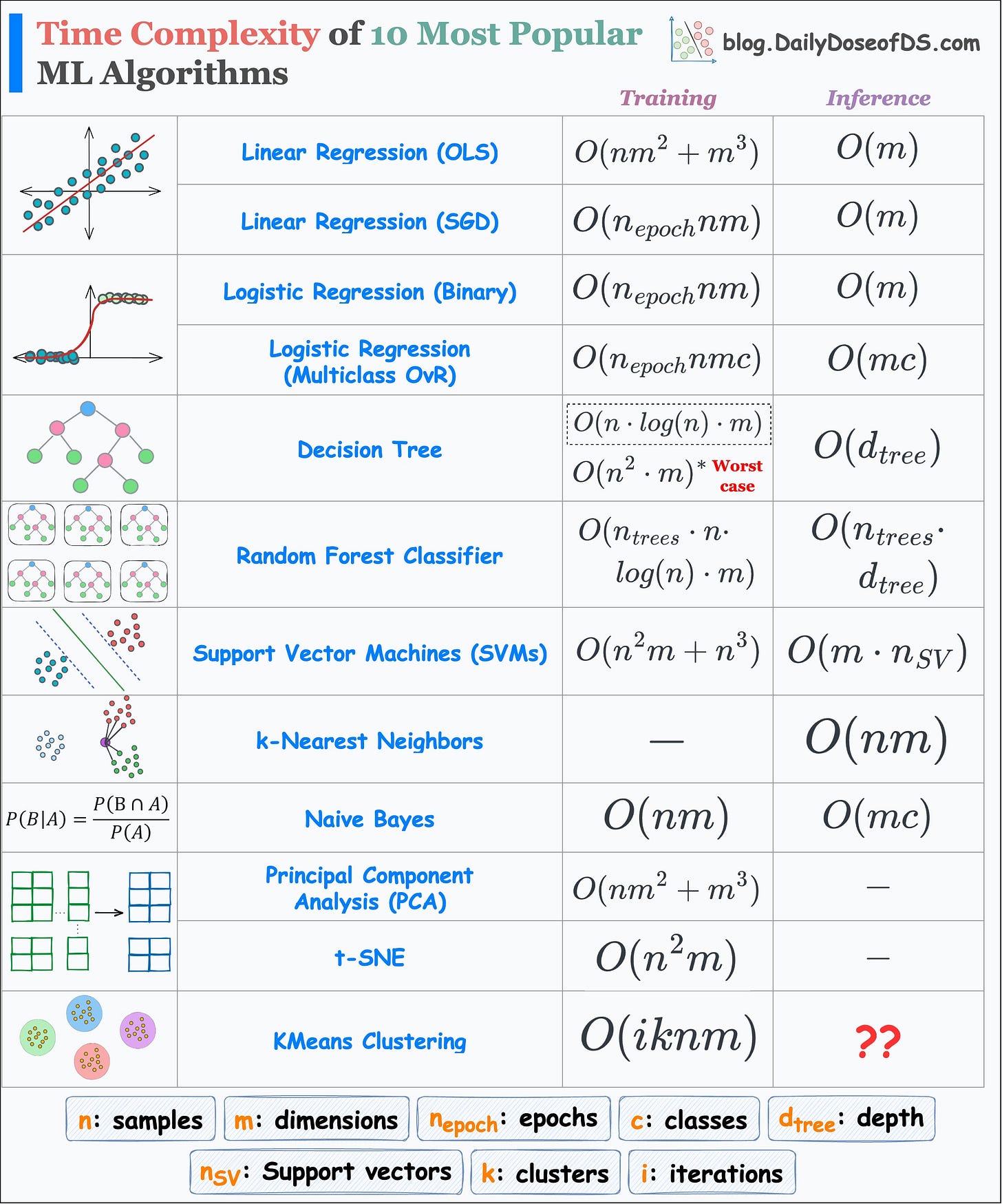

Training and Inference Time Complexity of 10 ML Algorithms

...in a single frame.

I read a report about code reviews recently, and things don’t look good.

Data suggests that ~80% of developers wait more than one day for a review on their pull request (and this is irrespective of team sizes as shown below):

Code review is a tedious job, and teams are still doing it the same way as 5 years ago.

Sourcery is making manual code reviews obsolete with AI. Thus, every pull request instantly gets a human-like review from Sourcery with general feedback, in-line comments, and relevant suggestions.

What used to take over a day now takes a few seconds only.

Sourcery handles 500,000+ requests every month (reviews + refactoring + writing tests + documenting code, etc.) and I have been using it for over 1.5 years now.

If you care about your and your team’s productivity, get started right away:

Thanks to Sourcery for partnering with me today!

Let’s get to today’s post now.

Run-time complexity of ML algos

I prepared the following visual which depicts the run-time complexity of the 10 most popular ML algorithms.

But why even care about run time?

There are multiple reasons why I always care about run time and why you should too.

To begin, we know that everyone is a big fan of sklearn implementations.

It literally takes just two (max three) lines of code to run any ML algorithm with sklearn.

Yet, in my experience, due to this simplicity, most users often overlook:

The core understanding of an algorithm.

The data-specific conditions that allow us to use an algorithm.

For instance, it makes no sense to use PCA when you have plenty of dimensions.

This is because PCA’s run-time grows cubically with dimensions.

Similarly, you are up for a big surprise if you use SVM or t-SNE on a dataset with plenty of samples.

SVM’s run-time grows cubically with the total number of samples.

t-SNE’s run-time grows quadratically with the total number of samples.

Another advantage of figuring out the run-time is that it helps us understand how an algorithm works end-to-end.

Of course, in the above table, I have made some assumptions here and there.

For instance:

In a random forest, all decision trees may have different depths. But here, I have assumed that they are equal.

During inference in kNN, we first find the distance to all data points. This gives a list of distances of size n (total samples).

Then, we find the k-smallest distances from this list.

The run-time to determine the k-smallest values may depend on the implementation.

Sorting and selecting the k-smallest values will be

O(nlogn).But if we use a priority queue, it will take

O(nlog(k)).

In t-SNE, there’s a learning step. However, the major run-time comes from computing the pairwise similarities in the high-dimensional space. You can learn how t-SNE works here: tSNE article.

Nonetheless, the table still accurately reflects the general run-time of each of these algorithms.

Today, as an exercise, I would encourage you to derive these run-time complexities yourself.

This activity will provide you so much confidence in algorithmic understanding.

If you get stuck, let me know.

👉 Over to you: Can you tell the inference run-time of KMeans Clustering?

Thanks for reading!

Are you preparing for ML/DS interviews or want to upskill at your current job?

Every week, I publish in-depth ML deep dives. The topics align with the practical skills that typical ML/DS roles demand.

Join below to unlock all full articles:

Here are some of the top articles:

[FREE] A Beginner-friendly and Comprehensive Deep Dive on Vector Databases.

Understanding LoRA-derived Techniques for Optimal LLM Fine-tuning

8 Fatal (Yet Non-obvious) Pitfalls and Cautionary Measures in Data Science.

5 Must-Know Ways to Test ML Models in Production (Implementation Included).

A Detailed and Beginner-Friendly Introduction to PyTorch Lightning: The Supercharged PyTorch

Don’t Stop at Pandas and Sklearn! Get Started with Spark DataFrames and Big Data ML using PySpark.

Federated Learning: A Critical Step Towards Privacy-Preserving Machine Learning.

You Cannot Build Large Data Projects Until You Learn Data Version Control!

Sklearn Models are Not Deployment Friendly! Supercharge Them With Tensor Computations.

Join below to unlock all full articles:

👉 If you love reading this newsletter, share it with friends!

👉 Tell the world what makes this newsletter special for you by leaving a review here :)