Why Prefer Mahalanobis Distance Over Euclidean Distance?

Euclidean distance is not always an ideal choice.

When computing the distance between two points, Euclidean distance implicitly treats the axes as independent.

Thus, Euclidean distance will produce misleading results if your features are correlated.

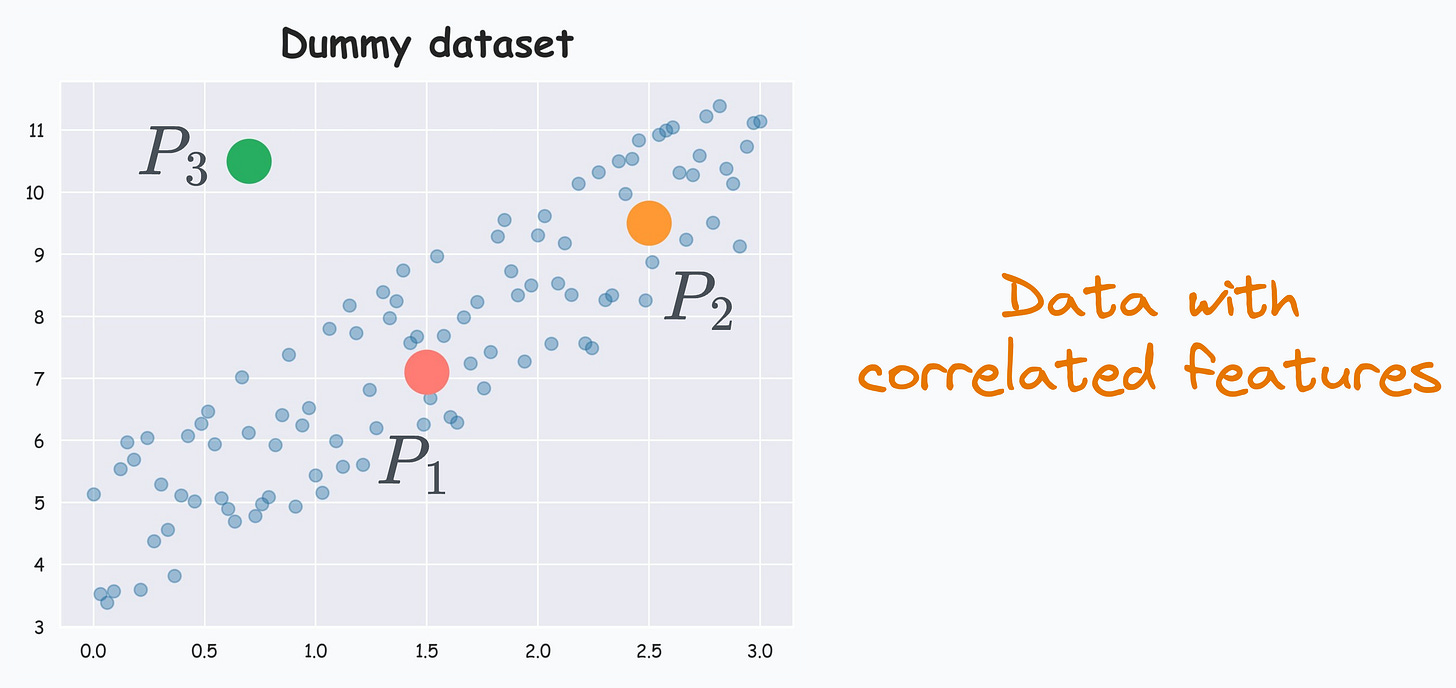

For instance, consider this dummy dataset below:

Clearly, the features are correlated.

Consider three points marked P1, P2, and P3 in this dataset.

Given the data distribution, intuition says that P2 is closer to P1 than P3 is, because P2 lies more within the data distribution than P3 does.

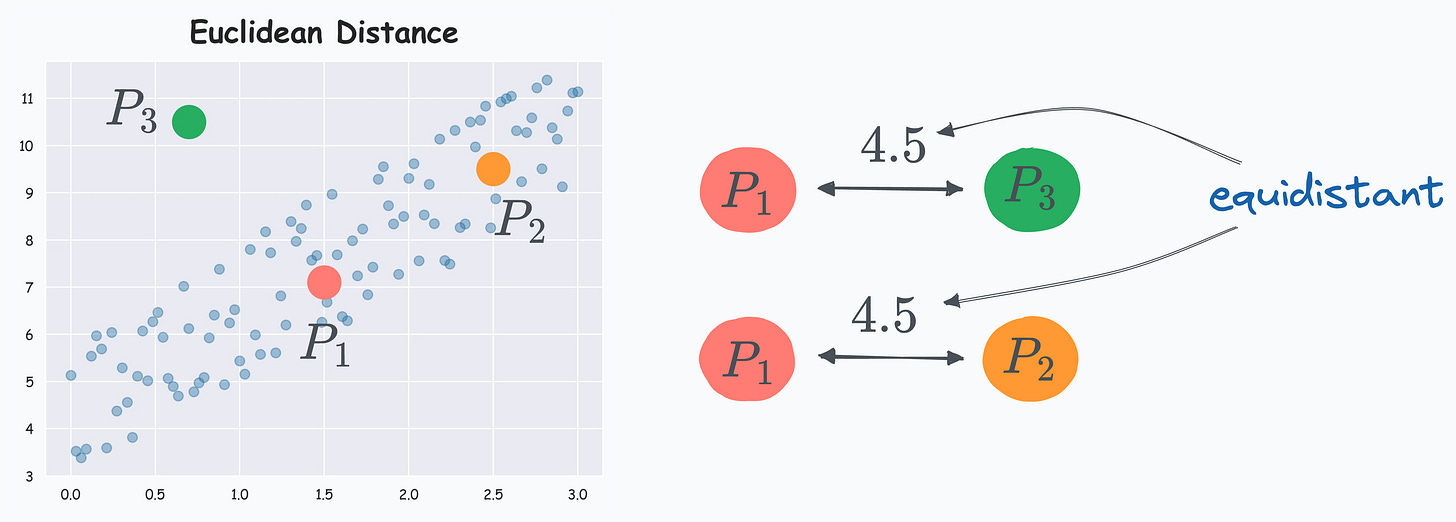

Yet Euclidean distance ignores this, and P2 and P3 come out equidistant from P1, as depicted below:

Mahalanobis distance addresses this limitation.

It is a distance metric that takes into account the data distribution.

As a result, it can measure how far a data point is from the distribution, which Euclidean distance cannot.

Returning to the earlier dataset, Mahalanobis distance places P2 closer to P1 than P3.
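
To make the comparison concrete, here is a minimal NumPy sketch. It does not use the dataset plotted in this post: the data is synthetic, and the positions of `p1`, `p2`, and `p3` are assumptions chosen so that `p2` lies along the trend and `p3` lies across it, both at the same Euclidean distance from `p1`.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical correlated 2D dataset (not the one plotted in the post).
cov_true = np.array([[1.0, 0.9], [0.9, 1.0]])
X = rng.multivariate_normal(mean=[0.0, 0.0], cov=cov_true, size=2000)

# p1 is the sample mean; p2 lies along the trend, p3 across it.
p1 = X.mean(axis=0)
offset = 2.0 / np.sqrt(2.0)
p2 = p1 + np.array([offset, offset])    # along the correlation direction
p3 = p1 + np.array([offset, -offset])   # across the correlation direction

# Inverse of the sample covariance matrix, estimated from the data.
cov_inv = np.linalg.inv(np.cov(X, rowvar=False))

def euclidean(a, b):
    return float(np.linalg.norm(a - b))

def mahalanobis(a, b, cov_inv):
    # sqrt((a - b)^T * cov_inv * (a - b))
    diff = a - b
    return float(np.sqrt(diff @ cov_inv @ diff))

print("Euclidean  :", euclidean(p1, p2), euclidean(p1, p3))
print("Mahalanobis:", mahalanobis(p1, p2, cov_inv), mahalanobis(p1, p3, cov_inv))
```

Under these assumptions, the two Euclidean distances are identical, while the Mahalanobis distance to `p3` should be noticeably larger than the one to `p2`.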

How does it work?

The core idea behind Mahalanobis distance is similar to what we do in Principal Component Analysis (PCA).

We also discussed it in detail here: Formulating the Principal Component Analysis (PCA) Algorithm From Scratch.

In a nutshell, the objective is to construct a new coordinate system with independent and orthogonal axes.

The steps are:

Step 1: Transform the columns into uncorrelated variables.

Step 2: Scale the new variables to make their variance equal to 1.

Step 3: Find the Euclidean distance in this new coordinate system.

So, eventually, we do use Euclidean distance.

However, we first transform the data to ensure that it obeys the assumptions of Euclidean distance.
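
The three steps above can be sketched in NumPy as follows. This is one possible way to do it, assuming we decorrelate with the eigenvectors of the sample covariance matrix (as in PCA). The synthetic data and the helper name `transform` are assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(42)

# Hypothetical correlated dataset used only for illustration.
X = rng.multivariate_normal([0.0, 0.0], [[2.0, 1.2], [1.2, 1.0]], size=1000)

mean = X.mean(axis=0)
cov = np.cov(X, rowvar=False)

# Eigen-decomposition of the (symmetric) covariance matrix.
eigvals, eigvecs = np.linalg.eigh(cov)

def transform(points):
    # Step 1: project onto the eigenvectors -> uncorrelated variables.
    decorrelated = (points - mean) @ eigvecs
    # Step 2: divide by the standard deviation along each new axis -> unit variance.
    return decorrelated / np.sqrt(eigvals)

Z = transform(X)

# Step 3: plain Euclidean distance in the new coordinate system.
a, b = X[0], X[1]
dist_steps = float(np.linalg.norm(Z[0] - Z[1]))

# Cross-check against the direct formula sqrt(diff^T * cov^-1 * diff).
diff = a - b
dist_formula = float(np.sqrt(diff @ np.linalg.inv(cov) @ diff))

print(dist_steps, dist_formula)
```

The two printed values should agree up to floating-point error, and the covariance matrix of `Z` should be (numerically) the identity matrix.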

Uses

One of the most common use cases of Mahalanobis distance is outlier detection.

Reconsidering the above dataset, P3 is clearly an outlier:

If we consider P1 as the distribution’s centroid and use Euclidean distance, we will infer that P3 is not an outlier, since P2 and P3 are equidistant from P1.

Using Mahalanobis distance, however, provides a clearer picture:

This becomes more useful in a high-dimensional setting where visualization is infeasible.
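
Here is a rough sketch of how such an outlier check could look in NumPy. The dataset, the appended `suspect` point, and the cut-off `threshold = 3.0` are all assumptions for illustration. In practice, the threshold needs to be chosen for your data.

```python
import numpy as np

rng = np.random.default_rng(7)

# Hypothetical correlated dataset.
X = rng.multivariate_normal([0.0, 0.0], [[1.0, 0.9], [0.9, 1.0]], size=500)

# Append one hypothetical point that sits off the main trend.
suspect = np.array([1.5, -1.5])
X = np.vstack([X, suspect])

centroid = X.mean(axis=0)
cov_inv = np.linalg.inv(np.cov(X, rowvar=False))

diffs = X - centroid
# Row-wise quadratic form: diff^T * cov_inv * diff for every point.
maha = np.sqrt(np.einsum("ij,jk,ik->i", diffs, cov_inv, diffs))
eucl = np.linalg.norm(diffs, axis=1)

threshold = 3.0  # assumed cut-off, not a universal rule
outlier_idx = np.flatnonzero(maha > threshold)

print("Suspect point: Euclidean =", eucl[-1], "| Mahalanobis =", maha[-1])
print("Indices flagged as outliers:", outlier_idx)
```

The suspect point is not the farthest point in Euclidean terms, yet its Mahalanobis distance from the centroid is large. A few regular points may also cross the assumed threshold, which is expected with any fixed cut-off.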

Another, less commonly discussed use case is a variant of kNN that uses Mahalanobis distance instead of Euclidean distance.
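
A brute-force version of this idea can be sketched in a few lines of NumPy. The function name `knn_predict_mahalanobis`, the choice of estimating a single covariance matrix from all training points, and the toy data are assumptions for illustration, not a reference implementation.

```python
import numpy as np

def knn_predict_mahalanobis(X_train, y_train, x_query, k=5):
    # Assumption: one covariance matrix estimated from all training points.
    cov_inv = np.linalg.inv(np.cov(X_train, rowvar=False))
    diffs = X_train - x_query
    # Squared Mahalanobis distance from the query to every training point.
    d2 = np.einsum("ij,jk,ik->i", diffs, cov_inv, diffs)
    nearest = np.argsort(d2)[:k]
    # Majority vote among the k nearest neighbors.
    labels, counts = np.unique(y_train[nearest], return_counts=True)
    return labels[np.argmax(counts)]

rng = np.random.default_rng(1)
cov = [[1.0, 0.95], [0.95, 1.0]]

# Two hypothetical elongated, parallel classes.
X0 = rng.multivariate_normal([0.0, 0.0], cov, size=200)
X1 = rng.multivariate_normal([1.0, -1.0], cov, size=200)
X_train = np.vstack([X0, X1])
y_train = np.array([0] * 200 + [1] * 200)

pred_a = knn_predict_mahalanobis(X_train, y_train, np.array([2.0, 2.0]))
pred_b = knn_predict_mahalanobis(X_train, y_train, np.array([3.0, 1.0]))
print(pred_a, pred_b)
```

The first query lies along the trend of class 0 and the second along the trend of class 1, so the predictions should follow the class whose elongated cluster each query falls into.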

SciPy implements the Mahalanobis distance, which you can check here: Mahalanobis distance SciPy docs.
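
For reference, a minimal usage sketch with `scipy.spatial.distance.mahalanobis` is shown below. Note that the function expects the inverse of the covariance matrix (`VI`), which we estimate here from a synthetic dataset assumed purely for illustration.

```python
import numpy as np
from scipy.spatial.distance import mahalanobis

rng = np.random.default_rng(0)

# Hypothetical correlated dataset.
X = rng.multivariate_normal([0.0, 0.0], [[1.0, 0.8], [0.8, 1.0]], size=1000)

# SciPy takes the INVERSE covariance matrix as the third argument.
VI = np.linalg.inv(np.cov(X, rowvar=False))

u = X[0]            # a data point
v = X.mean(axis=0)  # the centroid of the data

d = mahalanobis(u, v, VI)
print(d)
```

Passing the covariance matrix itself instead of its inverse is an easy mistake to make here, so it is worth double-checking the argument.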

👉 Over to you: What are some other limitations of Euclidean distance?
