I have been using FireDucks quite extensively lately.

For starters, FireDucks is a heavily optimized alternative to Pandas that exposes the same API.

All you need to do is replace the Pandas import with the FireDucks import. That’s it.

The db-benchmark includes scenarios that execute fundamental data science operations across multiple datasets. FireDucks appears to be the fastest DataFrame library for common big data operations under this benchmark:

Moreover, as per TPC-H benchmarks across 22 queries:

Modin had an average speed-up of 1.0x over Pandas.

Polars had an average speed-up of 57x over Pandas.

But FireDucks had an average speed-up of 125x over Pandas.

A demo of this speed-up comparison between FireDucks, Pandas and Polars is shown in the video above.
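To make the "average speed-up" numbers concrete, here is a minimal sketch of how such a figure could be computed. It assumes speed-up means Pandas time divided by the other library's time for each query, averaged with a plain arithmetic mean. The benchmark may aggregate differently, and the helper names and timings below are made up purely for illustration.

```python
import time
from statistics import mean


def time_call(fn, repeats=3):
    """Return the best wall-clock time (in seconds) of fn over several runs."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        best = min(best, time.perf_counter() - start)
    return best


def average_speedup(baseline_times, candidate_times):
    """Arithmetic mean of the per-query ratios baseline / candidate."""
    ratios = [b / c for b, c in zip(baseline_times, candidate_times)]
    return mean(ratios)


# Hypothetical per-query timings in seconds (illustrative, not benchmark data).
baseline = [2.0, 4.0, 9.0]   # e.g. the Pandas runs
candidate = [1.0, 1.0, 3.0]  # e.g. the faster library's runs

# Per-query ratios are 2.0, 4.0 and 3.0, so the mean is 3.0.
print(average_speedup(baseline, candidate))

# time_call can wrap any zero-argument callable, such as one query.
elapsed = time_call(lambda: sum(range(100_000)))
print(f"best of 3 runs: {elapsed:.6f}s")
```

In a real comparison, each entry in the two lists would come from timing the same query once per library with something like `time_call`.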

At its core, FireDucks is heavily driven by lazy execution, unlike Pandas, which executes right away.

This allows FireDucks to build a logical execution plan and apply possible optimizations.
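To see why deferring execution helps, here is a toy sketch of the idea in plain Python. It is not how FireDucks is implemented. The `LazyPipeline` class and its methods are hypothetical names, and the only "optimization" shown is moving filters ahead of column selection, which I picked as one simple example of a plan rewrite.

```python
class LazyPipeline:
    """Toy lazy pipeline: records steps and runs them only on evaluate()."""

    def __init__(self, rows, steps=()):
        self.rows = rows
        self.steps = tuple(steps)

    def select(self, *columns):
        # Record the step instead of executing it.
        return LazyPipeline(self.rows, self.steps + (("select", columns),))

    def filter(self, column, predicate):
        # Record the step instead of executing it.
        return LazyPipeline(self.rows, self.steps + (("filter", (column, predicate)),))

    def optimized_plan(self):
        """Reorder the recorded plan so that filters run before selects."""
        filters = [step for step in self.steps if step[0] == "filter"]
        selects = [step for step in self.steps if step[0] == "select"]
        return filters + selects

    def evaluate(self):
        """Execute the optimized plan and return the resulting rows."""
        rows = self.rows
        for kind, arg in self.optimized_plan():
            if kind == "filter":
                column, predicate = arg
                rows = [row for row in rows if predicate(row[column])]
            else:
                rows = [{col: row[col] for col in arg} for row in rows]
        return rows


rows = [
    {"city": "A", "sales": 10, "note": "x"},
    {"city": "B", "sales": 20, "note": "y"},
    {"city": "A", "sales": 30, "note": "z"},
]

# Building the query does no work; it only accumulates a plan.
query = LazyPipeline(rows).select("city", "sales").filter("sales", lambda v: v > 15)

# The plan was written as select -> filter but runs as filter -> select.
print([kind for kind, _ in query.optimized_plan()])
print(query.evaluate())
```

An eager library would have projected all three rows before filtering. Because the lazy version sees the whole plan first, it can drop rows early and project only what survives.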

How to use FireDucks?

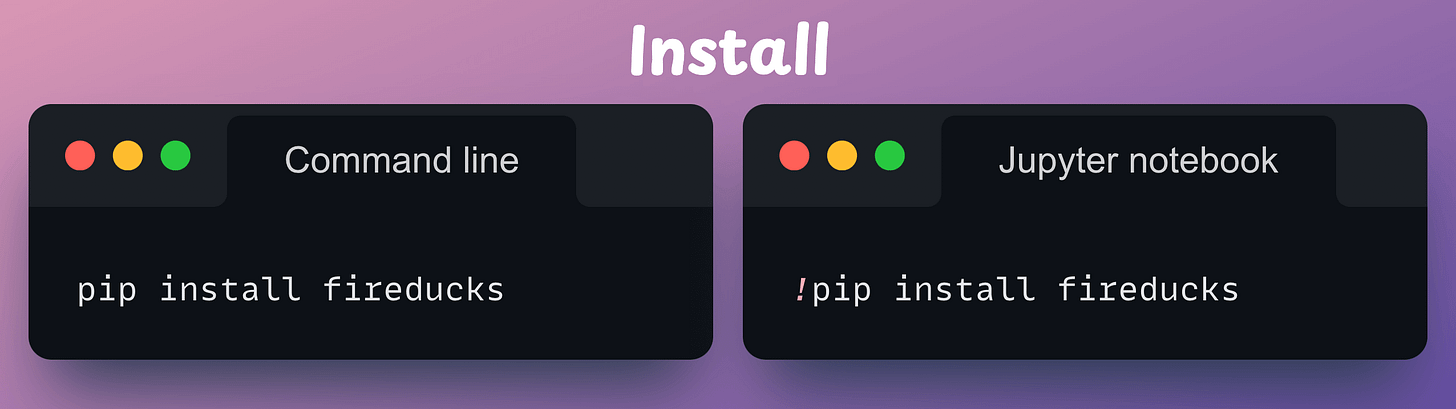

First, install the library from PyPI with `pip install fireducks`.

Next, there are three ways to use it:

If you are using IPython or Jupyter Notebook, load the extension by running `%load_ext fireducks.pandas` before `import pandas as pd`.

FireDucks also provides a pandas-like module (`fireducks.pandas`), which can be imported instead of Pandas. Thus, to use FireDucks in an existing Pandas pipeline, replace the standard import statement (`import pandas as pd`) with the one from FireDucks (`import fireducks.pandas as pd`):

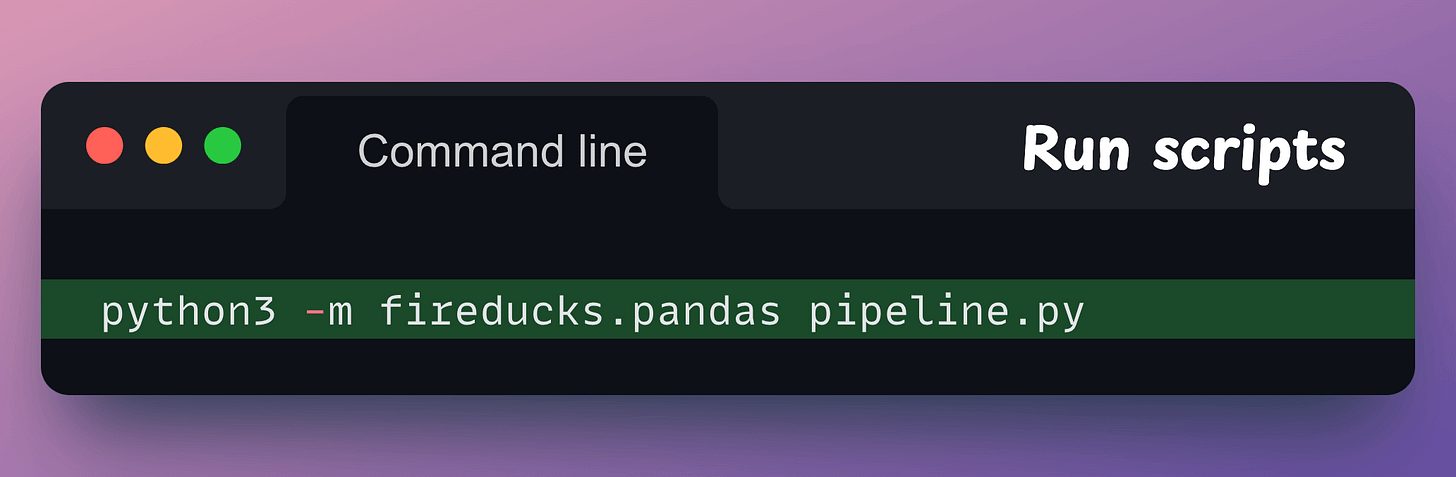
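If you want the same pipeline to run on machines where FireDucks may not be installed, the import swap can be wrapped in a small fallback. This is a sketch of my own, not something FireDucks ships: `pick_backend` is a hypothetical helper, and the tiny DataFrame is there only to show that the rest of the code stays unchanged.

```python
import importlib
import importlib.util


def pick_backend(candidates=("fireducks.pandas", "pandas")):
    """Return the first candidate whose top-level package is installed, else None."""
    for name in candidates:
        top_level = name.split(".")[0]
        if importlib.util.find_spec(top_level) is not None:
            return name
    return None


backend = pick_backend()
if backend is None:
    print("Neither FireDucks nor Pandas is installed.")
else:
    # Equivalent to `import fireducks.pandas as pd` or `import pandas as pd`.
    pd = importlib.import_module(backend)

    # Everything below is ordinary Pandas code, whichever backend was loaded.
    df = pd.DataFrame({"city": ["A", "B", "A"], "sales": [10, 20, 30]})
    totals = df.groupby("city")["sales"].sum()
    print(backend, totals.to_dict())
```

The point is that only the import line changes. The DataFrame code itself is identical under both libraries.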

Lastly, if you have a Python script, running it through the FireDucks module (for example, `python3 -m fireducks.pandas your_script.py`) automatically replaces the Pandas import with FireDucks, with no change to the script itself.

Done!

It’s that simple to use FireDucks.

The code for the above benchmarks is available in this Colab notebook.

👉 Over to you: What are some other ways to accelerate Pandas operations in general?

P.S. For those wanting to develop “Industry ML” expertise:

At the end of the day, all businesses care about impact. That’s it!

Can you reduce costs?

Drive revenue?

Can you scale ML models?

Predict trends before they happen?

We have covered several topics (with implementations) in the past that address exactly these questions.

Here are some of them:

Learn sophisticated graph architectures and how to train them on graph data: A Crash Course on Graph Neural Networks – Part 1.

So many real-world NLP systems rely on pairwise context scoring. Learn scalable approaches here: Bi-encoders and Cross-encoders for Sentence Pair Similarity Scoring – Part 1.

Learn techniques to run large models on small devices: Quantization: Optimize ML Models to Run Them on Tiny Hardware.

Learn how to generate prediction intervals or sets with strong statistical guarantees for increasing trust: Conformal Predictions: Build Confidence in Your ML Model’s Predictions.

Learn how to identify causal relationships and answer business questions: A Crash Course on Causality – Part 1.

Learn how to scale ML model training: A Practical Guide to Scaling ML Model Training.

Learn techniques to reliably roll out new models in production: 5 Must-Know Ways to Test ML Models in Production (Implementation Included).

Learn how to build privacy-first ML systems: Federated Learning: A Critical Step Towards Privacy-Preserving Machine Learning.

Learn how to compress ML models and reduce costs: Model Compression: A Critical Step Towards Efficient Machine Learning.

All these resources will help you cultivate the key skills that businesses care about the most.